Archive for the ‘Plan Stability’ Category

Query to get SQL_ID from DBA_SQL_PLAN_BASELINES

Doing a SQL Plan Baseline fleet cleanup, I wanted to get the SQL_ID given a SQL Plan Baseline. Long ago I wrote a PL/SQL function that takes some SQL Text as input and returns its SQL_ID. I could then use that function, passing the SQL Text from the SQL Plan Baseline (this is on 12c), and get back the SQL_ID I need!

I tested this query below on 12.1.0.2, and it gives me exactly what I wanted: Input a SQL Plan Baseline, and output its SQL_ID. And all from a simple SQL query. Enjoy it! 🙂

    /* from https://carlos-sierra.net/2013/09/12/function-to-compute-sql_id-out-of-sql_text/ */
    /* based on http://www.slaviks-blog.com/2010/03/30/oracle-sql_id-and-hash-value/ */
    WITH
    FUNCTION compute_sql_id (sql_text IN CLOB)
    RETURN VARCHAR2 IS
      BASE_32 CONSTANT VARCHAR2(32) := '0123456789abcdfghjkmnpqrstuvwxyz';
      l_raw_128 RAW(128);
      l_hex_32 VARCHAR2(32);
      l_low_16 VARCHAR(16);
      l_q3 VARCHAR2(8);
      l_q4 VARCHAR2(8);
      l_low_16_m VARCHAR(16);
      l_number NUMBER;
      l_idx INTEGER;
      l_sql_id VARCHAR2(13);
    BEGIN
      /* use md5 algorithm on sql_text and produce 128 bit hash */
      l_raw_128 := SYS.DBMS_CRYPTO.hash(TRIM(CHR(0) FROM sql_text)||CHR(0), SYS.DBMS_CRYPTO.hash_md5);
      l_hex_32 := RAWTOHEX(l_raw_128); /* 32 hex characters */
      l_low_16 := SUBSTR(l_hex_32, 17, 16); /* we only need lower 16 */
      l_q3 := SUBSTR(l_low_16, 1, 8); /* 3rd quarter (8 hex characters) */
      l_q4 := SUBSTR(l_low_16, 9, 8); /* 4th quarter (8 hex characters) */
      /* need to reverse order of each of the 4 pairs of hex characters */
      l_q3 := SUBSTR(l_q3, 7, 2)||SUBSTR(l_q3, 5, 2)||SUBSTR(l_q3, 3, 2)||SUBSTR(l_q3, 1, 2);
      l_q4 := SUBSTR(l_q4, 7, 2)||SUBSTR(l_q4, 5, 2)||SUBSTR(l_q4, 3, 2)||SUBSTR(l_q4, 1, 2);
      /* assembly back lower 16 after reversing order on each quarter */
      l_low_16_m := l_q3||l_q4;
      /* convert to number */
      SELECT TO_NUMBER(l_low_16_m, 'xxxxxxxxxxxxxxxx') INTO l_number FROM DUAL;
      /* 13 pieces base-32 (5 bits each) make 65 bits. we do have 64 bits */
      FOR i IN 1 .. 13
      LOOP
        l_idx := TRUNC(l_number / POWER(32, (13 - i))); /* index on BASE_32 */
        l_sql_id := l_sql_id||SUBSTR(BASE_32, (l_idx + 1), 1); /* stitch 13 characters */
        l_number := l_number - (l_idx * POWER(32, (13 - i))); /* for next piece */
      END LOOP;
      RETURN l_sql_id;
    END compute_sql_id;
    SELECT compute_sql_id(sql_text) sql_id, signature
      FROM dba_sql_plan_baselines
    /
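As a sanity check on the idea, here is a minimal sketch that lists each baseline with a SQL_ID derived by the built-in DBMS_SQL_TRANSLATOR.SQL_ID function, next to the SQL_ID of any cursor in memory with the same signature. I am assuming that this function is available and callable from SQL on your 12c release with your privileges, so please verify that before relying on it. Keep in mind that several SQL_IDs can map to one signature (differences in case or white space), so the two columns will not always agree.

```sql
-- Sketch only: compare a SQL_ID derived from the baseline text with the
-- SQL_ID of cursors currently in memory that share the same signature.
-- Assumes DBMS_SQL_TRANSLATOR.SQL_ID exists and is callable on your release.
SELECT b.sql_handle,
       b.plan_name,
       DBMS_SQL_TRANSLATOR.SQL_ID(b.sql_text) AS computed_sql_id,
       s.sql_id AS cursor_sql_id
  FROM dba_sql_plan_baselines b
  LEFT JOIN (SELECT DISTINCT sql_id, exact_matching_signature
               FROM v$sql) s
    ON s.exact_matching_signature = b.signature
 ORDER BY b.sql_handle, b.plan_name
/
```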

Scripts to deal with SQL Plan Baselines, SQL Profiles and SQL Patches

To mitigate SQL performance issues, I do make use of SQL Plan Baselines, SQL Profiles and SQL Patches, on a daily basis. Our environments are single-instance 12.1.0.2 CDBs, with over 2,000 PDBs. Our goal is Execution Plan Stability and consistent performance, over CBO plan flexibility. The CBO does a good job, considering the complexity imposed by current applications design. Nevertheless, some SQL require some help in order to enhance their plan stability.

I have written and shared a set of scripts that simply make the use of a bunch of APIs a lot easier, with better documented actions, and fully consistent within the organization. I have shared these scripts with the community in the past, and I keep them updated as needs change. All these “CS” scripts are available under the download section on the right column.

The current version of the CS scripts is more like a toolset: you treat them as a whole. All of them call some other script that exists within the cs_internal subdirectory, so I usually navigate to the parent sql directory and connect into SQL*Plus from there. All these scripts can be easily cloned and/or customized to your specific needs. They are available as “free to use” and “as is”. There is no requirement to keep their heading intact, so you can reverse-engineer them and make them your own if you want. Just keep in mind that I maintain, enhance, and extend this CS toolset every single day, so what you get today is a subset of what you will get tomorrow. If you think an enhancement you need (or a fix) is beneficial to the larger community (and to you), please let me know.

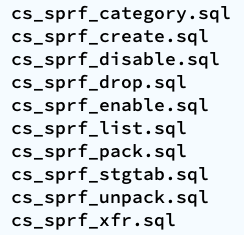

SQL Plan Baselines scripts

With the set of SQL Plan Baselines scripts, you can: 1) create a baseline based on a cursor or a plan stored into AWR; 2) enable and disable baselines; 3) drop baselines; 4) store them into a local staging table; 5) restore them from their local staging table; 6) promote as “fixed” or demote from “fixed”; 7) “zap” them if you have installed “El Zapper” (iod_spm).
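For readers who have not used the underlying APIs, the following is a rough sketch of the kind of DBMS_SPM calls that sit behind operations 2 through 5 above. It is not the CS scripts themselves: the substitution variables &&sql_handle. and &&plan_name. and the staging table name SPM_STGTAB are placeholders I made up for illustration.

```sql
-- Sketch only, not the CS scripts. &&sql_handle., &&plan_name. and
-- SPM_STGTAB are hypothetical placeholders.
-- Note: the staging table cannot be created in the SYS schema.
SET SERVEROUTPUT ON
DECLARE
  l_plans PLS_INTEGER;
BEGIN
  -- disable one plan (attribute_value 'YES' enables it again);
  -- attribute_name 'FIXED' promotes to or demotes from "fixed" the same way
  l_plans := DBMS_SPM.ALTER_SQL_PLAN_BASELINE(
               sql_handle      => '&&sql_handle.',
               plan_name       => '&&plan_name.',
               attribute_name  => 'ENABLED',
               attribute_value => 'NO');
  -- store the plan into a local staging table
  DBMS_SPM.CREATE_STGTAB_BASELINE(
    table_name  => 'SPM_STGTAB',
    table_owner => USER);
  l_plans := DBMS_SPM.PACK_STGTAB_BASELINE(
               table_name  => 'SPM_STGTAB',
               table_owner => USER,
               sql_handle  => '&&sql_handle.',
               plan_name   => '&&plan_name.');
  DBMS_OUTPUT.PUT_LINE('plans packed: '||l_plans);
  -- drop the plan from the baseline
  l_plans := DBMS_SPM.DROP_SQL_PLAN_BASELINE(
               sql_handle => '&&sql_handle.',
               plan_name  => '&&plan_name.');
  -- restore it from the staging table
  l_plans := DBMS_SPM.UNPACK_STGTAB_BASELINE(
               table_name  => 'SPM_STGTAB',
               table_owner => USER,
               sql_handle  => '&&sql_handle.',
               plan_name   => '&&plan_name.');
  DBMS_OUTPUT.PUT_LINE('plans unpacked: '||l_plans);
END;
/
```

Running the four steps in one block only makes sense as a demonstration; in practice each would be a separate, deliberate action.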

Note: “El Zapper” is a PL/SQL package that extends the functionality of SQL Plan Management by automagically creating SQL Plan Baselines based on proven performance of a SQL statement over time, while considering a large number of executions, and a variety of historical plans. Please do not confuse “El Zapper” with auto-evolve of SPM. They are based on two very distinct premises. “El Zapper” also monitors the performance of active SQL Plan Baselines, and during an observation window it may disable a SQL Plan Baseline, if such plan no longer performs as “promised” (according to some thresholds). Most applications do not need “El Zapper”, since the use of SQL Plan Management should be more of an exception than a rule.

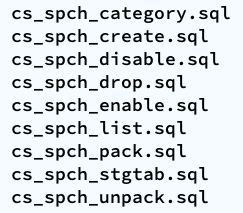

SQL Profiles scripts

With the set of SQL Profiles scripts, you can: 1) create a profile based on the outline of a cursor, or from a plan stored into AWR; 2) enable and disable profiles; 3) drop profiles; 4) store them into a local staging table; 5) restore them from their local staging table; 6) transfer them from one location to another (very similar to coe_xfr_sql_profile.sql, but in a more modular way).

Note: Regarding the transfer of a SQL Profile, the concept is simple: 1) on source location generate two plain text scripts, one that contains the SQL text, and a second that includes the Execution Plan (outline); 2) execute these two scripts on a target location, in order to create a SQL Profile there. The beauty of this method is not only that you can easily move Execution Plans between locations, but that you can actually create a SQL Profile getting the SQL Text from SQL_ID “A”, and the Execution Plan from SQL_ID “B”, allowing you to do things like: removing CBO Hints, or using a plan from a similar SQL but not quite the same (e.g. I can tweak a stand-alone cloned version of a SQL statement, and once I get the plan that I need, I associate the SQL Text from the original SQL, with the desired Execution Plan out of the stand-alone customized version of the SQL, after that I create a SQL Plan Baseline and drop the staging SQL Profile).
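To make the “SQL Text from A, Execution Plan from B” idea concrete, here is a minimal sketch done within a single database rather than through two generated scripts. It relies on DBMS_SQLTUNE.IMPORT_SQL_PROFILE, which is undocumented but is the call coe_xfr_sql_profile.sql is known for using. The substitution variables are placeholders, and I am assuming every outline hint fits in 500 characters (the element size of SYS.SQLPROF_ATTR); longer hints would need to be split.

```sql
-- Sketch only: SQL text from SQL_ID "A", outline from a plan of SQL_ID "B".
-- &&sql_id_a., &&sql_id_b. and &&plan_hash_value_b. are placeholders.
-- Assumes both cursors are in memory and each hint is <= 500 characters.
DECLARE
  l_sql_text CLOB;
  l_hints    SYS.SQLPROF_ATTR;
BEGIN
  -- text of the SQL we want to influence
  SELECT sql_fulltext
    INTO l_sql_text
    FROM v$sqlarea
   WHERE sql_id = '&&sql_id_a.';
  -- outline hints of the plan we want to reuse
  SELECT x.hint
    BULK COLLECT INTO l_hints
    FROM v$sql_plan p,
         XMLTABLE('/*/outline_data/hint'
                  PASSING XMLTYPE(p.other_xml)
                  COLUMNS hint VARCHAR2(500) PATH '.') x
   WHERE p.sql_id = '&&sql_id_b.'
     AND p.plan_hash_value = &&plan_hash_value_b.
     AND p.other_xml IS NOT NULL
     AND p.child_number = (SELECT MIN(c.child_number)
                             FROM v$sql c
                            WHERE c.sql_id = '&&sql_id_b.'
                              AND c.plan_hash_value = &&plan_hash_value_b.);
  -- attach the outline of "B" to the text of "A"
  DBMS_SQLTUNE.IMPORT_SQL_PROFILE(
    sql_text    => l_sql_text,
    profile     => l_hints,
    name        => 'xfr_&&sql_id_a._&&plan_hash_value_b.',
    description => 'text from &&sql_id_a. plan from &&sql_id_b.',
    category    => 'DEFAULT',
    validate    => TRUE,
    replace     => TRUE,
    force_match => FALSE);
END;
/
```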

SQL Patches scripts

With the set of SQL Patches scripts, you can: 1) create a SQL patch based on one or more CBO Hints you provide (e.g.: GATHER_PLAN_STATISTICS MONITOR FIRST_ROWS(1) OPT_PARAM('_fix_control' '5922070:OFF') NO_BIND_AWARE); 2) enable and disable SQL patches; 3) drop SQL patches; 4) store them into a local staging table; 5) restore them from their local staging table.

Note: I use SQL Patches a lot, especially to embed CBO Hints that generate some desirable diagnostics details (and not so much to change plans), such as the ones provided by GATHER_PLAN_STATISTICS and MONITOR. In some cases, after I use the pathfinder tool written by Mauro Pagano, I have to disable a CBO patch (funny thing: I use a SQL Patch to disable a CBO Patch!). I also use a SQL Patch if I need to enable Adaptive Cursor Sharing (ACS) for one SQL (we disabled ACS for one major application). Bear in mind that SQL Plan Baselines, SQL Profiles and SQL Patches happily co-exist, so you can use them together, but I do prefer to use SQL Plan Baselines alone, whenever possible.
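As an illustration of the diagnostics use case, a SQL Patch carrying GATHER_PLAN_STATISTICS and MONITOR could be created along these lines on 12.1. This is a sketch, not the CS script: the patch name diag_&&sql_id. is made up, and the internal API shown is the same one used by the purge_cursor.sql script on this page, so its behavior on other releases is an assumption to verify.

```sql
-- Sketch only: diagnostics-only SQL Patch for one SQL_ID (12.1 style API).
-- The name diag_&&sql_id. is a hypothetical naming convention.
DECLARE
  l_sql_text CLOB;
BEGIN
  SELECT sql_fulltext
    INTO l_sql_text
    FROM v$sqlarea
   WHERE sql_id = '&&sql_id.';
  SYS.DBMS_SQLDIAG_INTERNAL.I_CREATE_PATCH (
    sql_text    => l_sql_text,
    hint_text   => 'GATHER_PLAN_STATISTICS MONITOR',
    name        => 'diag_&&sql_id.',
    description => 'diagnostics hints only',
    category    => 'DEFAULT',
    validate    => TRUE
  );
END;
/
-- confirm the patch exists and check its status
SELECT name, status, created
  FROM dba_sql_patches
 WHERE name = 'diag_&&sql_id.'
/
-- once diagnostics are collected, drop it
BEGIN
  SYS.DBMS_SQLDIAG.DROP_SQL_PATCH (
    name   => 'diag_&&sql_id.',
    ignore => TRUE
  );
END;
/
```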

Adapting and adopting SQL Plan Management (SPM)

Introduction

This post is about: “Adapting and adopting SQL Plan Management (SPM) to achieve execution plan stability for sub-second queries on a high-rate OLTP mission-critical application”. In our case, such an application is implemented on top of several Oracle 12c multitenant databases, where a consistent average execution time is more valuable than flexible execution plans. We successfully achieved plan stability by implementing a simple algorithm using PL/SQL that calls DBMS_SPM public APIs.

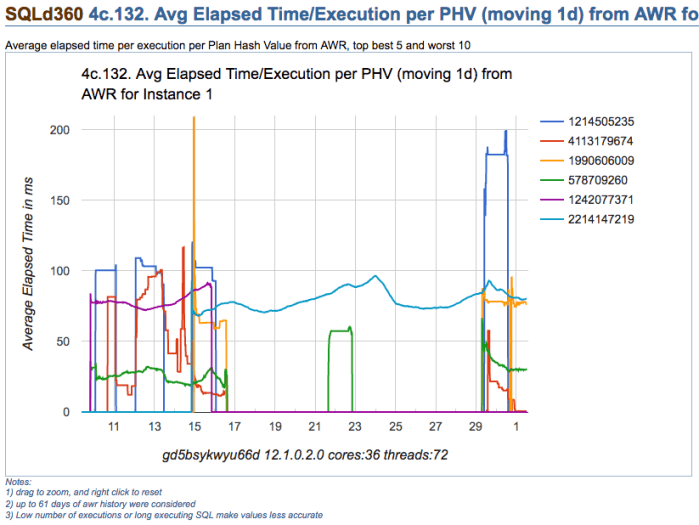

Chart below depicts a typical case where the average performance of a large set of business-critical SQL statements suddenly degraded from sub-millisecond to 15 or 20ms, then became more stable around 3ms. Wide spikes are a typical trademark of an Execution Plan for one or more SQL statements flipping for some time. In order to produce a more consistent latency we needed to improve plan stability, and of course the preferred tool to achieve that on an Oracle database is SQL Plan Management.

Algorithm

We tested and ruled out adaptive SQL Plan Management, which is an excellent 12c new feature. Due to the dynamics of this application, where transactional data shifts so fast, allowing this “adaptive SPM” feature to evaluate auto-captured plans using bind variable values captured a few hours earlier unfortunately produced false positives. These false positives “evolved” as execution plans that were numerically optimal for the values captured (at the time the candidate plan was captured), but performed poorly when executed on “current” values a few hours later. Nevertheless, this 12c “adaptive SPM” new feature is worth exploring for other applications.

We adapted SPM so it would only generate SQL Plan Baselines on SQL that executes often, and that is critical for the business. The algorithm has some complexity such as candidate evaluation and SQL categorization; and besides SPB creation it also includes plan demotion and plan promotion. We have successfully implemented it in some PDBs and we are currently doing a rollout to entire CDBs. The algorithm is depicted on diagram on the left, and more details are included in corresponding presentation slides listed on the right-hand bar. I plan to talk about this topic on an international Oracle Users Group in 2018.
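To give a feel for what “SQL that executes often” can mean in practice, here is a simplified candidate query against the cursor cache. It is only a sketch of the first filtering step: the thresholds (10,000 executions, 1ms per execution) and the excluded schemas are hypothetical values I picked for illustration, and the real algorithm applies more criteria than this.

```sql
-- Sketch only: cursors without a SQL Plan Baseline that execute often and fast.
-- Thresholds and excluded schemas are hypothetical; adjust to your application.
SELECT s.sql_id,
       s.plan_hash_value,
       SUM(s.executions) AS total_executions,
       ROUND(SUM(s.elapsed_time) / SUM(s.executions) / 1e3, 3) AS avg_et_ms,
       MIN(s.first_load_time) AS first_load_time
  FROM v$sql s
 WHERE s.sql_plan_baseline IS NULL
   AND s.executions > 0
   AND s.is_obsolete = 'N'
   AND s.parsing_schema_name NOT IN ('SYS', 'SYSTEM')
 GROUP BY s.sql_id, s.plan_hash_value
HAVING SUM(s.executions) >= 10000
   AND SUM(s.elapsed_time) / SUM(s.executions) <= 1000 -- microseconds per execution
 ORDER BY total_executions DESC
/
```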

This algorithm is scripted into a sample PL/SQL package, which you can find on a subdirectory on my shared scripts. If you consider using this sample script for an application of your own, be sure you make it yours before attempting to use it. In other words: fully understand it first, then proceed to customize it accordingly and test it thoroughly.

Results

The chart below shows how the average performance of business-critical SQL became more stable after implementing the algorithm to adapt and adopt SPM on a pilot PDB. Not everything went smoothly, though: we had some outliers that required some tuning of the algorithm. Among the challenges we faced: volatile data (creating a SPB when a table was almost empty, then using it when the table was larger); skewed values (creating a SPB for a non-popular value, then using it on a popular value); proper use of multiple optimal plans due to Adaptive Cursor Sharing (ACS); and rejected candidates due to conservative initial restrictions in the algorithm (performance per execution, number of executions, age of cursor, etc.).

Conclusion

If your OLTP application contains business-critical SQL that executes at a high rate, and where a spike in latency risks affecting SLAs, you may want to consider implementing SQL Plan Management. Consider both options: “adaptive SPM” if it satisfies your requirements; otherwise build a PL/SQL library that can implement more complex logic for candidate evaluation and for SPB maintenance. I do believe SPM works great, especially when you enhance its out-of-the-box functionality to satisfy your specific needs.

Creating a SQL Plan Baseline from Cursor Cache or AWR

A DBA deals with performance issues often, and having a SQL suddenly performing poorly is common. What do we do? We proceed to “pin” an execution plan, then investigate root cause (the latter is true if time to next fire permits).

DBMS_SPM provides some APIs to create a SQL Plan Baseline (SPB) from the Cursor Cache, or from a SQL Tuning Set (STS), but not from the Automatic Workload Repository (AWR). For the latter, you need a two-step approach: create a STS from AWR, then load a SPB from the STS. Fine, except when your next fire is waiting for you, or when deciding which is the “best” plan is not trivial.
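The two-step approach can be sketched as follows. This is a bare-bones illustration and not the spb_create.sql script: the STS name and the substitution variables (&&sql_id., &&plan_hash_value., &&begin_snap_id., &&end_snap_id.) are placeholders, and since it reads AWR and uses SQL Tuning Sets it assumes the corresponding pack licenses.

```sql
-- Sketch only: AWR -> SQL Tuning Set -> SQL Plan Baseline.
-- All substitution variables and the STS name are placeholders.
SET SERVEROUTPUT ON
DECLARE
  l_sqlset_name VARCHAR2(30) := 's_&&sql_id.';
  l_filter      VARCHAR2(200) :=
    'sql_id = ''&&sql_id.'' AND plan_hash_value = &&plan_hash_value.';
  l_cursor      DBMS_SQLTUNE.SQLSET_CURSOR;
  l_plans       PLS_INTEGER;
BEGIN
  -- step 1: create a STS and load the plan from AWR into it
  DBMS_SQLTUNE.CREATE_SQLSET(
    sqlset_name => l_sqlset_name,
    description => 'staging for SPB on &&sql_id.');
  OPEN l_cursor FOR
    SELECT VALUE(p)
      FROM TABLE(DBMS_SQLTUNE.SELECT_WORKLOAD_REPOSITORY(
                   begin_snap     => &&begin_snap_id.,
                   end_snap       => &&end_snap_id.,
                   basic_filter   => l_filter,
                   attribute_list => 'ALL')) p;
  DBMS_SQLTUNE.LOAD_SQLSET(
    sqlset_name     => l_sqlset_name,
    populate_cursor => l_cursor);
  -- step 2: load the SPB from the STS
  l_plans := DBMS_SPM.LOAD_PLANS_FROM_SQLSET(
               sqlset_name  => l_sqlset_name,
               basic_filter => l_filter);
  DBMS_OUTPUT.PUT_LINE('plans loaded: '||l_plans);
  -- the STS was only a vehicle, so remove it
  DBMS_SQLTUNE.DROP_SQLSET(sqlset_name => l_sqlset_name);
END;
/
```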

Take for example chart below, which depicts multiple execution plans with different performance for one SQL statement. The SQL statement is actually quite simple, and data is not significantly skewed. On this particular application, usually one-size-fits-all (meaning one-and-only-one plan) works well for most values passed on variable place holders. Then, which plan would you choose?

Note: please get all scripts using the download column on the right

Looking at summary of known Execution Plans’ performance below (as reported by planx.sql), we can see the same 6 Execution Plans.

1st Plan on list shows an average execution time of 2.897ms according to AWR, and 0.896ms according to Cursor Cache; and number of recorded executions for this Plan are 2,502 and 2,178 respectively. We see this Plan contains one Nested Loop, and if we look at historical performance we notice this Plan takes less than 109ms 95% of the time, less than 115ms 97% of the time, and less than 134ms 99% of the time. We also see that the worst recorded AWR period had this SQL performing in under 150ms (on average for that one period).

We also notice that last plan on list performs one execution in 120.847ms on average (as per AWR) and 181.113ms according to Cursor Cache (on average as well). Then, “pinning” 1st plan on list seems like a good choice, but not too different from all but the last plan, especially when we consider both: average performance and historical performance according to percentiles reported.

PLANS PERFORMANCE

| Plan Hash Value | ET Avg AWR (ms) | ET Avg MEM (ms) | CPU Avg AWR (ms) | CPU Avg MEM (ms) | BG Avg AWR | BG Avg MEM | Executions AWR | Executions MEM | MIN Cost | MAX Cost | NL | HJ | MJ | ET 100th Pctl (ms) | ET 99th Pctl (ms) | ET 97th Pctl (ms) | ET 95th Pctl (ms) | CPU 100th Pctl (ms) | CPU 99th Pctl (ms) | CPU 97th Pctl (ms) | CPU 95th Pctl (ms) |
|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|
| 4113179674 | 2.897 | 0.896 | 2.715 | 0.714 | 96 | 5 | 2,502 | 2,178 | 8 | 738 | 1 | 0 | 0 | 149.841 | 133.135 | 114.305 | 108.411 | 147.809 | 133.007 | 113.042 | 107.390 |
| 578709260 | 29.576 | 32.704 | 28.865 | 31.685 | 1,583 | 1,436 | 6,150 | 1,843 | 67 | 875 | 1 | 0 | 0 | 154.560 | 84.264 | 65.409 | 57.311 | 148.648 | 75.209 | 62.957 | 56.305 |
| 1990606009 | 74.399 | 79.054 | 73.163 | 77.186 | 1,117 | 1,192 | 172 | 214 | 905 | 1,108 | 0 | 1 | 0 | 208.648 | 208.648 | 95.877 | 95.351 | 205.768 | 205.768 | 94.117 | 93.814 |
| 1242077371 | 77.961 | | 77.182 | | 1,772 | | 8,780 | | 949 | 1,040 | 0 | 1 | 0 | 102.966 | 98.206 | 91.163 | 89.272 | 100.147 | 97.239 | 90.165 | 88.412 |
| 2214147219 | 79.650 | 82.413 | 78.242 | 80.817 | 1,999 | 2,143 | 42,360 | 24,862 | 906 | 1,242 | 0 | 1 | 0 | 122.535 | 101.293 | 98.442 | 95.737 | 119.240 | 99.118 | 95.266 | 93.156 |
| 1214505235 | 120.847 | 181.113 | 105.485 | 162.783 | 506 | 1,355 | 48 | 12 | 114 | 718 | 1 | 0 | 0 | 285.950 | 285.950 | 285.950 | 285.950 | 193.954 | 193.954 | 193.954 | 193.954 |

Plans performance summary above is displayed in a matter of seconds by planx.sql, sqlperf.sql and by a new script spb_create.sql. This output helps make a quick decision about which Execution Plan is better for “pinning”, meaning: to create a SPB on it.
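The AWR side of such a summary can be approximated with a query like the one below. This is my own simplified sketch, not the query used by planx.sql: it computes, per plan, the overall average elapsed time per execution and percentiles over the per-snapshot averages, which is how I read the “ET Avg AWR” and “Pctl” columns. It reads AWR, so the usual Diagnostics pack requirement applies.

```sql
-- Sketch only: per-plan AWR averages and percentiles for one SQL_ID.
-- Percentiles are computed over per-snapshot averages (one row per snapshot).
SELECT h.plan_hash_value,
       ROUND(SUM(h.elapsed_time_delta) / SUM(h.executions_delta) / 1e3, 3) AS et_avg_awr_ms,
       ROUND(SUM(h.cpu_time_delta) / SUM(h.executions_delta) / 1e3, 3) AS cpu_avg_awr_ms,
       ROUND(SUM(h.buffer_gets_delta) / SUM(h.executions_delta)) AS bg_avg_awr,
       SUM(h.executions_delta) AS executions_awr,
       ROUND(MAX(h.elapsed_time_delta / h.executions_delta) / 1e3, 3) AS et_100th_pctl_ms,
       ROUND(PERCENTILE_DISC(0.99) WITHIN GROUP
             (ORDER BY h.elapsed_time_delta / h.executions_delta) / 1e3, 3) AS et_99th_pctl_ms,
       ROUND(PERCENTILE_DISC(0.95) WITHIN GROUP
             (ORDER BY h.elapsed_time_delta / h.executions_delta) / 1e3, 3) AS et_95th_pctl_ms
  FROM dba_hist_sqlstat h
 WHERE h.sql_id = '&&sql_id.'
   AND h.executions_delta > 0
 GROUP BY h.plan_hash_value
 ORDER BY et_avg_awr_ms
/
```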

Sometimes such a decision is not that trivial, as we can see in the sample below. Which plan is better? I would go with 2nd on list. Why? Performance-wise this plan is more stable. It does a Hash Join, so I am expecting to see a Plan with full scans, but if I can get consistent executions under 0.4s (according to percentiles), I would be tempted to “pin” this 2nd Plan instead of 1st one. And I would stay away from 3rd and 5th. So maybe I would create a SPB with 3 plans instead of just one, and include on this SPB 1st, 2nd and 4th on the list.

PLANS PERFORMANCE

| Plan Hash Value | ET Avg AWR (ms) | ET Avg MEM (ms) | CPU Avg AWR (ms) | CPU Avg MEM (ms) | BG Avg AWR | BG Avg MEM | Executions AWR | Executions MEM | MIN Cost | MAX Cost | NL | HJ | MJ | ET 100th Pctl (ms) | ET 99th Pctl (ms) | ET 97th Pctl (ms) | ET 95th Pctl (ms) | CPU 100th Pctl (ms) | CPU 99th Pctl (ms) | CPU 97th Pctl (ms) | CPU 95th Pctl (ms) |
|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|
| 1917891576 | 0.467 | 0.334 | 0.330 | 0.172 | 119 | 33 | 554,914,504 | 57,748,249 | 6 | 1,188 | 2 | 0 | 0 | 6,732.017 | 10.592 | 1.628 | 1.572 | 1,420.864 | 1.557 | 1.482 | 1.261 |
| 99953997 | 1.162 | 2.427 | 0.655 | 0.492 | 83 | 55 | 58,890,160 | 2,225,247 | 12 | 2,311 | 0 | 1 | 0 | 395.819 | 235.474 | 108.142 | 34.909 | 56.008 | 22.329 | 12.926 | 3.069 |
| 3559532534 | 1.175 | 1,741.041 | 0.858 | 91.486 | 359 | 46 | 21,739,877 | 392 | 4 | 20 | 1 | 0 | 0 | 89,523.768 | 4,014.301 | 554.740 | 298.545 | 21,635.611 | 216.456 | 54.050 | 30.130 |
| 3650324870 | 2.028 | 20.788 | 1.409 | 2.257 | 251 | 199 | 24,038,404 | 143,819 | 11 | 5,417 | 0 | 1 | 0 | 726.964 | 254.245 | 75.322 | 20.817 | 113.259 | 21.211 | 13.591 | 8.486 |
| 3019880278 | 43.465 | | 43.029 | | 20,217 | | 13,349 | | 5,693 | 5,693 | 0 | 1 | 0 | 43.465 | 43.465 | 43.465 | 43.465 | 43.029 | 43.029 | 43.029 | 43.029 |

About new script spb_create.sql

Update: Scripts to deal with SQL Plan Baselines, SQL Profiles and SQL Patches

This new script is a life-saver for us, since our response time for an alert is usually measured in minutes, with a resolution (and sometimes a root cause analysis) expected in less than one hour from the time the incident is raised.

This script is quite simple:

- it provides a list of known Execution Plans including current (Cursor Cache) and historical (AWR) performance as displayed in two samples above, then

- asks which Plan Hash Values (PHVs) you want to create a SPB on (you can enter up to 3 PHVs); and last

- asks if you want these plans to be set as FIXED

After you respond to ACCEPT parameters, then a SPB for your SQL is created and displayed. It does not matter if the Plan exists on Cursor Cache and/or on AWR, it finds the Plan and creates the SPB for you. Then: finding known Execution Plans, deciding which one is a better choice (or maybe more than one), and creating a SPB, all can be done very rapidly.
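For the Cursor Cache case, the core of what happens after the prompts boils down to one DBMS_SPM call followed by a look at the result. The block below is a stripped-down sketch under that assumption, not the actual spb_create.sql; the substitution variables are placeholders, and &&fixed. is expected to be YES or NO.

```sql
-- Sketch only: create a SPB from a plan in the Cursor Cache, then list it.
-- &&sql_id., &&plan_hash_value. and &&fixed. (YES or NO) are placeholders.
SET SERVEROUTPUT ON
DECLARE
  l_plans PLS_INTEGER;
BEGIN
  l_plans := DBMS_SPM.LOAD_PLANS_FROM_CURSOR_CACHE(
               sql_id          => '&&sql_id.',
               plan_hash_value => &&plan_hash_value.,
               fixed           => '&&fixed.',
               enabled         => 'YES');
  DBMS_OUTPUT.PUT_LINE('plans loaded: '||l_plans);
END;
/
-- display the baseline plans now associated with this SQL
SELECT b.sql_handle, b.plan_name, b.enabled, b.accepted, b.fixed, b.created
  FROM dba_sql_plan_baselines b
 WHERE b.signature IN (SELECT s.exact_matching_signature
                         FROM v$sql s
                        WHERE s.sql_id = '&&sql_id.')
 ORDER BY b.created
/
```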

If you still prefer to use SQL Profiles and not SPBs for whatever reason, script coe_xfr_sql_profile.sql is still around and updated. In these 12c days, and soon 18c and beyond, I’d much rather use SQL Plan Management and create SPBs, though!

Anyways, enjoy these free scripts and become a faster hero “pinning” good plans. Then don’t forget to do diligent root cause analysis afterwards. I use SQLd360 by Mauro Pagano for deep understanding of what is going on with my SQL statements.

Soon, I will post about a cool free tool that automates the implementation of SQL Plan Management on a high-rate OLTP where stability is more important than flexibility (frequently changing Execution Plans). Stay tuned!

Purging a cursor in Oracle – revisited

A few years ago I created a post about “how to flush a cursor out the shared pool“, using DBMS_SHARED_POOL.PURGE. For the most part, this method has helped me to get rid of an entire parent cursor and all child cursors for a given SQL, but more often than not I have found that on 12c this method may not work, leaving active a set of cursors I want to flush.

Script below is an enhanced version, where besides using DBMS_SHARED_POOL.PURGE, we also create a dummy SQL patch, then drop it. This method seems to completely flush parent and child cursors. Why use this method instead? We are implementing SQL Plan Management (SPM), and we have found that in some cases, some child cursors are still shared several hours after a SQL Plan Baseline (SPB) is created. We could argue a possible bug and pursue it as such, but in the meantime my quick and dirty workaround is: whenever I want to flush an individual parent cursor for one SQL, and all of its child cursors, I just execute script below passing SQL_ID.

Anyways, just wanted to share and document this purge_cursor.sql script for those in similar need. I have developed it on 12.1.0.2, and haven’t tested it on lower or higher versions.

    -- purge_cursor.sql
    DECLARE
      l_name VARCHAR2(64);
      l_sql_text CLOB;
    BEGIN
      -- get address, hash_value and sql text
      SELECT address||','||hash_value, sql_fulltext
        INTO l_name, l_sql_text
        FROM v$sqlarea
       WHERE sql_id = '&&sql_id.';
      -- not always does the job
      SYS.DBMS_SHARED_POOL.PURGE (
        name  => l_name,
        flag  => 'C',
        heaps => 1
      );
      -- create fake sql patch
      SYS.DBMS_SQLDIAG_INTERNAL.I_CREATE_PATCH (
        sql_text    => l_sql_text,
        hint_text   => 'NULL',
        name        => 'purge_&&sql_id.',
        description => 'PURGE CURSOR',
        category    => 'DEFAULT',
        validate    => TRUE
      );
      -- drop fake sql patch
      SYS.DBMS_SQLDIAG.DROP_SQL_PATCH (
        name   => 'purge_&&sql_id.',
        ignore => TRUE
      );
    END;
    /
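A quick way to confirm the effect is to look at the cursor cache and at the SQL patches afterwards. This check is a small sketch of my own and not part of purge_cursor.sql; it assumes the same &&sql_id. substitution variable is still defined in the session.

```sql
-- Sketch only: verify that no child cursors remain and that the dummy
-- SQL patch was dropped. A later execution of the SQL repopulates v$sql.
SELECT COUNT(*) AS child_cursors,
       MAX(last_active_time) AS last_active_time
  FROM v$sql
 WHERE sql_id = '&&sql_id.'
/
SELECT name, status
  FROM dba_sql_patches
 WHERE name = 'purge_&&sql_id.'
/
```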

Poor man’s script to summarize reasons WHY cursors are not shared

Having a large number of child cursors can affect parsing performance as hinted by Abel Macias on his blog post about Diagnosis of a High Version Count (HVC). On his post, Abel also refers to a note on MOS which includes a script that dives into the reasons WHY our cursors are not getting shared. So, for deep-dives in this area, I strongly suggest reading his post and using the referenced script provided at MOS.

Besides longer parse times, and potential library cache contention, manifested by some waits (such as on mutex), there is another side effect that may bite us: CBO may produce a different plan when a SQL statement is hard-parsed while creating a new child cursor. This latter side effect can be critical for transactional applications with SLA depending on very short latencies of some queries.

This post is about a poor man’s script that, with no installation whatsoever, lists an aggregated summary of the reasons why our cursors are not shared, including child cursor counts and distinct SQL_ID counts for each reason (see sample output below). I had to write such a script since in our environments we cannot simply run diagnostics scripts that create objects in the database, such as the one provided by MOS.

| CURSORS | SQL_IDS | REASON_NOT_SHARED |
|---|---|---|
| 226916 | 7826 | ROLL_INVALID_MISMATCH |
| 29387 | 105 | BIND_EQUIV_FAILURE |
| 21794 | 4027 | HASH_MATCH_FAILED |
| 11588 | 2134 | OPTIMIZER_MISMATCH |
| 11027 | 413 | BIND_LENGTH_UPGRADEABLE |
| 11008 | 384 | BIND_MISMATCH |
| 10125 | 2697 | USE_FEEDBACK_STATS |
| 4540 | 109 | OPTIMIZER_MODE_MISMATCH |
| 1652 | 72 | PURGED_CURSOR |
| 1245 | 81 | BIND_UACS_DIFF |
| 1062 | 316 | LANGUAGE_MISMATCH |
| 771 | 103 | LOAD_OPTIMIZER_STATS |
| 500 | 52 | STATS_ROW_MISMATCH |
| 238 | 86 | MV_QUERY_GEN_MISMATCH |
| 94 | 3 | MULTI_PX_MISMATCH |
| 28 | 4 | AUTH_CHECK_MISMATCH |
| 23 | 1 | INSUFF_PRIVS |

Once I get to see some reasons for not sharing, some responsible for a large number of child cursors (and distinct SQL_IDs), then I can search on MOS as Abel suggested. Ideally, if you are interested in plan stability, you may want to reduce the times the CBO is tasked to create a new child cursor (and potentially a new Execution Plan).

In output sample above, top in our list is ROLL_INVALID_MISMATCH, causing 226,916 child cursors in as many as 7,826 SQL statements. This particular reason for not sharing cursors is due to a persistent gathering of schema object statistics with the explicit request to invalidate cursors. Since we want to promote plan stability, we would need to suspend such aggressive gathering of CBO statistics and validate reason ROLL_INVALID_MISMATCH is reduced.
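To follow up on one reason, a drill-down like the one below can show which SQL statements contribute the most child cursors to it, and what the current statistics invalidation preference is. This is a sketch of my own: ROLL_INVALID_MISMATCH is used as the example column, FETCH FIRST assumes 12c, and the 20-row limit is arbitrary.

```sql
-- Sketch only: top SQL_IDs by child cursors not shared for one reason.
SELECT c.sql_id,
       COUNT(*) AS child_cursors
  FROM v$sql_shared_cursor c
 WHERE c.roll_invalid_mismatch = 'Y'
 GROUP BY c.sql_id
 ORDER BY child_cursors DESC
 FETCH FIRST 20 ROWS ONLY
/
-- current global preference used when gathering CBO statistics
SELECT DBMS_STATS.GET_PREFS('NO_INVALIDATE') AS no_invalidate
  FROM dual
/
```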

Anyways, free script used is below. Enjoy it!

*** edited *** a new version of the script is now available (below). Thanks to stewashton for his input.

    -- sql_shared_cursor.sql
    SET HEA OFF LIN 300 NEWP NONE PAGES 0 FEED OFF ECHO OFF VER OFF TRIMS ON TRIM ON TI OFF TIMI OFF SQLBL ON BLO . RECSEP OFF;
    SPO all_reasons.sql
    SELECT CASE WHEN ROWNUM = 1 THEN '( ' ELSE ', ' END||column_name
      FROM dba_tab_columns
     WHERE table_name = 'V_$SQL_SHARED_CURSOR'
       AND owner = 'SYS'
       AND data_type = 'VARCHAR2'
       AND data_length = 1
    /
    SPO OFF;
    GET all_reasons.sql
    I )
    I )
    I WHERE value = 'Y'
    I GROUP BY reason_not_shared
    I ORDER BY cursors DESC, sql_ids DESC, reason_not_shared
    0 ( value FOR reason_not_shared IN
    0 FROM v$sql_shared_cursor UNPIVOT
    0 SELECT COUNT(*) cursors, COUNT(DISTINCT sql_id) sql_ids, reason_not_shared
    L
    SET HEA ON NEWP 1 PAGES 30
    PRO please wait
    /
    !rm all_reasons.sql

Forcing a “Nested Loop only” Execution Plan

Sometimes you do what you have to do. So here I confess doing something I usually avoid: forcing an Execution Plan (which is not the same as using a more conventional method for Plan stability).

This is a case on 11.2.0.3.0 base release where the application vendor sets the optimizer to 9i, and tweaks other CBO parameters in questionable ways, then some queries produce suboptimal plans (as expected); and you are called to help without changing the obvious.

There is a family of queries from an ad-hoc query generator that permits users to issue queries without a set of selective predicates. These queries join several large tables and their performance is poor (as expected as well!). On top of the previous, all these queries include the /*+ FIRST_ROWS */ CBO Hint and the questionable DISTINCT keyword. Note: it is quite common for developers to throw a DISTINCT keyword “to avoid duplicates” where the mere existence of duplicates would be an indication of an application bug; so “why fix it if I can hide it, right?”.

There is one caveat though: these queries include a generic predicate “rownum <= :b1”, and the value passed defaults to 5000, so the users’ rationale is “if I only want the first X rows my query should return fast”. This highlights still another questionable practice, since it is hard to imagine a user scrolling 5000 rows and making any sense of such a large set, especially when the full “filtered” set would be several million rows long. So the original problem is questionable in several ways. Nevertheless, sometimes we are called to help besides providing advice. And no, we are not allowed to slap hands 😉

The good news is that we can use this extra predicate on rownum and make these queries return the first X rows really fast; and I mean less than 5 seconds instead of over one hour! And if users want not 5000 but 500 or even 50 rows, then we can be in the sub-second range!

You may be thinking FIRST_ROWS optimization, and that was my first try. Unfortunately, on 11.2.0.3.0, even reversing all the suboptimal CBO parameters at the session level, I would consistently get an Execution Plan with a few Hash Joins and a large Cost; and if I were to force a Nested Loop Plan, the cost would be several orders of magnitude larger so the CBO would not pick it! Nevertheless, such a “Nested Loop only” Execution Plan would fulfill the user’s expectations, regardless of the validity of the initial request. And yes, CBO statistics are OK, not perfect but simply OK. One more piece of info: this is not Exadata! (if it were Exadata most probably these same Execution Plans with full table scans and Hash Joins would simply fly!).

So, my issue became: How do I force an Execution Plan that only contains Nested Loops? If I could do that, then the COUNT STOPKEY operation could help me halt my SQL execution once I fetched the first X rows (a Hash Join does not allow me to do that). Remember: these tables have literally millions of rows. I could pepper these queries with a ton of CBO Hints and I would get my desired “Nested Loop only” Execution Plan… But that would be a lot of work and tricky at best.

SQL Patch to the rescue

I could have used a SQL Profile, but I think this dirty trick of suppressing Hash Joins and Sort Merge Joins would be better served with a SQL Patch. I also thought of Siebel: they do tweak CBO parameters as well, and they suppress Hash Joins, but they change System and Session level parameters… Since I wanted my change to be very localized, a SQL Patch could provide me just what I needed.

Under the Downloads section on the margin of this page, there is a “cscripts” link that includes the sqlpch.sql script. I used this script and passed as the second parameter the following string (1st parameter is SQL_ID). With a SQL Patch generated this way, I could systematically produce a “Nested-Loops only” Execution Plan for these few queries. I did not have to change the original SQL, change the CBO environment at the System or Session level, or restrict the query generator, and I did not have to “educate” the users to avoid such unbounded queries.

OPT_PARAM("_optimizer_sortmerge_join_enabled" "FALSE") OPT_PARAM("_hash_join_enabled" "FALSE")
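After a patch like this is in place and the SQL has been re-parsed, it is worth confirming that the new child cursor actually uses the patch and that its plan has no Hash Joins or Merge Joins left. The query below is one way I would sketch that check; it is not part of sqlpch.sql, and &&sql_id. is a placeholder.

```sql
-- Sketch only: per child cursor, show the SQL Patch in use and count the
-- join operations plus COUNT STOPKEY steps found in its plan.
SELECT s.child_number,
       s.sql_patch,
       s.plan_hash_value,
       SUM(CASE WHEN p.operation = 'NESTED LOOPS' THEN 1 ELSE 0 END) AS nl,
       SUM(CASE WHEN p.operation = 'HASH JOIN' THEN 1 ELSE 0 END) AS hj,
       SUM(CASE WHEN p.operation = 'MERGE JOIN' THEN 1 ELSE 0 END) AS mj,
       SUM(CASE WHEN p.operation = 'COUNT' AND p.options = 'STOPKEY'
                THEN 1 ELSE 0 END) AS count_stopkey
  FROM v$sql s
  JOIN v$sql_plan p
    ON p.sql_id = s.sql_id
   AND p.child_number = s.child_number
 WHERE s.sql_id = '&&sql_id.'
 GROUP BY s.child_number, s.sql_patch, s.plan_hash_value
 ORDER BY s.child_number
/
```

A child cursor showing the patch name, zeros under hj and mj, and at least one count_stopkey is the outcome described in this post.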

Conclusion

I have to concede doing something questionable, in this case using a SQL Patch to force a desired Execution Plan instead of fixing the obvious, simply because that was the shortest path to alleviate the user’s pain.

I consider this technique above a temporary work-around and not a solution to the actual issue. In this case the right way to handle this issue would be:

- Have the application vendor certify their application to the latest release of the database and reset all CBO related parameters, plus

- Have the application vendor remove CBO Hints and DISTINCT keyword from queries, plus

- Configure the ad-hoc query generator to restrict users from executing queries without selective predicates, then

- Tune those outlier queries that may still need some work to perform as per business requirements, and possibly

- Educate the users to provide as many selective predicates as possible

Anyways, the potential of using a SQL Patch to tweak an Execution Plan in mysterious ways is quite powerful, and something we may want to keep in the back of our minds for a rainy day…

Discovering if a System level Parameter has changed its value (and when it happened)

Quite often I learn of a system where “nobody changed anything” and suddenly the system is experiencing some strange behavior. Then after diligent investigation it turns out someone changed a little parameter at the System level, but somehow disregarded mentioning it since he/she thought it had no connection to the unexpected behavior. As we all know, System parameters are big knobs that we don’t change lightly, still we often see “unknown” changes like the one described.

Script below produces a list of changes to System parameter values, indicating when a parameter was changed and from which value into which value. It does not filter out cache re-sizing operations, or resource manager plan changes. Both would be easy to exclude, but I’d rather see those global changes listed as well.

Note: This script below should only be executed if your site has a license for the Oracle Diagnostics pack (or Tuning pack), since it reads from AWR.

    WITH
    all_parameters AS (
    SELECT snap_id,
           dbid,
           instance_number,
           parameter_name,
           value,
           isdefault,
           ismodified,
           lag(value) OVER (PARTITION BY dbid, instance_number, parameter_hash ORDER BY snap_id) prior_value
      FROM dba_hist_parameter
    )
    SELECT TO_CHAR(s.begin_interval_time, 'YYYY-MM-DD HH24:MI') begin_time,
           TO_CHAR(s.end_interval_time, 'YYYY-MM-DD HH24:MI') end_time,
           p.snap_id,
           p.dbid,
           p.instance_number,
           p.parameter_name,
           p.value,
           p.isdefault,
           p.ismodified,
           p.prior_value
      FROM all_parameters p,
           dba_hist_snapshot s
     WHERE p.value != p.prior_value
       AND s.snap_id = p.snap_id
       AND s.dbid = p.dbid
       AND s.instance_number = p.instance_number
     ORDER BY
           s.begin_interval_time DESC,
           p.dbid,
           p.instance_number,
           p.parameter_name
    /

Sample output follows, where we can see a parameter affecting Degree of Parallelism was changed. This is just to illustrate its use. Enjoy this new free script! It is now part of edb360.
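If you would rather hide cache re-sizing operations and resource manager plan changes, a minimal sketch of one way to do it follows. It rests on two assumptions of mine that are not part of the original script: that automatic cache re-sizing is recorded in dba_hist_parameter under parameter names that begin with a double underscore (such as __db_cache_size), and that resource manager plan switches are recorded under resource_manager_plan. Please verify both on your own system before trusting the filter. The same licensing note applies, since it still reads from AWR.

```sql
WITH
all_parameters AS (
SELECT snap_id,
       dbid,
       instance_number,
       parameter_name,
       value,
       LAG(value) OVER (PARTITION BY dbid, instance_number, parameter_hash ORDER BY snap_id) prior_value
  FROM dba_hist_parameter
 WHERE SUBSTR(parameter_name, 1, 2) <> '__'        -- assumption: auto-tuned cache sizes
   AND parameter_name <> 'resource_manager_plan'   -- assumption: resource manager plan switches
)
SELECT TO_CHAR(s.begin_interval_time, 'YYYY-MM-DD HH24:MI') begin_time,
       p.instance_number,
       p.parameter_name,
       p.prior_value,
       p.value
  FROM all_parameters p,
       dba_hist_snapshot s
 WHERE p.value != p.prior_value
   AND s.snap_id = p.snap_id
   AND s.dbid = p.dbid
   AND s.instance_number = p.instance_number
 ORDER BY
       s.begin_interval_time DESC,
       p.instance_number,
       p.parameter_name
/
```

The exclusions are applied inside the subquery, so the excluded parameters never reach the comparison between value and prior_value.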

Creating a SQL Plan Baseline out of a modified SQL

While delivering a session at KSCOPE 14, I was asked a very good question: Can I create a SQL Plan Baseline for an Original SQL out of a Modified SQL? In other words, query Q1, which performs poorly, has SQL_ID1 and SQL_HANDLE1, with a poorly performing Execution Plan with Hash Value PHV1. With a small modification to this query, like adding a CBO Hint or removing one, we obtain query Q2, which performs well, and has SQL_ID2, SQL_HANDLE2 and PHV2. So what we want is to associate PHV2 to SQL_ID1. The question is: how do we do that with an API?

Maria Colgan explains on this blog how to manually do exactly what is requested above. So the question becomes: can we do this manual procedure with an easy-to-use API?

The script coe_load_sql_baseline.sql below inputs SQL_ID1, SQL_ID2 and PHV2, which correspond to the Original SQL_ID, the Modified SQL_ID and the Plan Hash Value from the Modified SQL (it could have more than one plan); then it produces a SQL Plan Baseline for the Original SQL out of the Execution Plan of the Modified SQL. By doing this we can “fix” a poorly performing Query by attaching to it a well-performing Execution Plan that was produced by making a small temporary change to the Original SQL, like adding or removing a small set of CBO Hints. So we can generate good Execution Plans without actually changing the application.

The script coe_load_sql_baseline.sql is provided inside SQLT (MOS 215187.1) under the sqlt/utl directory. Always use the latest version. As of June 2014 the latest version is the one below. This script does not require SQLT to be installed.

SPO coe_load_sql_baseline.log;

SET DEF ON TERM OFF ECHO ON FEED OFF VER OFF HEA ON LIN 2000 PAGES 100 LONG 8000000 LONGC 800000 TRIMS ON TI OFF TIMI OFF SERVEROUT ON SIZE 1000000 NUM 20 SQLP SQL>;

SET SERVEROUT ON SIZE UNL;

REM

REM $Header: 215187.1 coe_load_sql_baseline.sql 11.4.5.8 2013/05/10 carlos.sierra $

REM

REM Copyright (c) 2000-2013, Oracle Corporation. All rights reserved.

REM

REM AUTHOR

REM carlos.sierra@oracle.com

REM

REM SCRIPT

REM coe_load_sql_baseline.sql

REM

REM DESCRIPTION

REM This script loads a plan from a modified SQL into the SQL

REM Plan Baseline of the original SQL.

REM If a good performing plan only reproduces with CBO Hints

REM then you can load the plan of the modified version of the

REM SQL into the SQL Plan Baseline of the original SQL.

REM In other words, the original SQL can use the plan that was

REM generated out of the SQL with hints.

REM

REM PRE-REQUISITES

REM 1. Have in cache or AWR the text for the original SQL.

REM 2. Have in cache the plan for the modified SQL

REM (usually with hints).

REM

REM PARAMETERS

REM 1. ORIGINAL_SQL_ID (required)

REM 2. MODIFIED_SQL_ID (required)

REM 3. PLAN_HASH_VALUE (required)

REM

REM EXECUTION

REM 1. Connect into SQL*Plus as user with access to data dictionary

REM and privileges to create SQL Plan Baselines. Do not use SYS.

REM 2. Execute script coe_load_sql_baseline.sql passing first two

REM parameters inline or until requested by script.

REM 3. Provide plan hash value of the modified SQL when asked.

REM

REM EXAMPLE

REM # sqlplus system

REM SQL> START coe_load_sql_baseline.sql gnjy0mn4y9pbm b8f3mbkd8bkgh

REM SQL> START coe_load_sql_baseline.sql;

REM

REM NOTES

REM 1. This script works on 11g or higher.

REM 2. For a similar script for 10g use coe_load_sql_profile.sql,

REM which uses custom SQL Profiles instead of SQL Baselines.

REM 3. For possible errors see coe_load_sql_baseline.log

REM 4. Use a DBA user but not SYS. Do not connect as SYS as the staging

REM table cannot be created in SYS schema and you will receive an error:

REM ORA-19381: cannot create staging table in SYS schema

REM

SET TERM ON ECHO OFF;

PRO

PRO Parameter 1:

PRO ORIGINAL_SQL_ID (required)

PRO

DEF original_sql_id = '&1';

PRO

PRO Parameter 2:

PRO MODIFIED_SQL_ID (required)

PRO

DEF modified_sql_id = '&2';

PRO

WITH
p AS (
SELECT DISTINCT plan_hash_value
  FROM gv$sql_plan
 WHERE sql_id = TRIM('&&modified_sql_id.')
   AND other_xml IS NOT NULL ),
m AS (
SELECT plan_hash_value,
       SUM(elapsed_time)/SUM(executions) avg_et_secs
  FROM gv$sql
 WHERE sql_id = TRIM('&&modified_sql_id.')
   AND executions > 0
 GROUP BY
       plan_hash_value )
SELECT p.plan_hash_value,
       ROUND(m.avg_et_secs/1e6, 3) avg_et_secs
  FROM p, m
 WHERE p.plan_hash_value = m.plan_hash_value
 ORDER BY
       avg_et_secs NULLS LAST;

PRO

PRO Parameter 3:

PRO PLAN_HASH_VALUE (required)

PRO

DEF plan_hash_value = '&3';

PRO

PRO Values passed to coe_load_sql_baseline:

PRO ~~~~~~~~~~~~~~~~~~~~~~~~~~~~~~~~~~~~~~

PRO ORIGINAL_SQL_ID: "&&original_sql_id."

PRO MODIFIED_SQL_ID: "&&modified_sql_id."

PRO PLAN_HASH_VALUE: "&&plan_hash_value."

PRO

WHENEVER SQLERROR EXIT SQL.SQLCODE;

SET TERM OFF ECHO ON;

-- trim parameters

COL original_sql_id NEW_V original_sql_id FOR A30;

COL modified_sql_id NEW_V modified_sql_id FOR A30;

COL plan_hash_value NEW_V plan_hash_value FOR A30;

SELECT TRIM('&&original_sql_id.') original_sql_id, TRIM('&&modified_sql_id.') modified_sql_id, TRIM('&&plan_hash_value.') plan_hash_value FROM DUAL;

-- open log file

SPO coe_load_sql_baseline_&&original_sql_id..log;

GET coe_load_sql_baseline.log;

.

-- get user

COL connected_user NEW_V connected_user FOR A30;

SELECT USER connected_user FROM DUAL;

VAR sql_text CLOB;

VAR plan_name VARCHAR2(30);

EXEC :sql_text := NULL;

EXEC :plan_name := NULL;

-- get sql_text from memory

BEGIN

SELECT REPLACE(sql_fulltext, CHR(00), ' ')

INTO :sql_text

FROM gv$sqlarea

WHERE sql_id = TRIM('&&original_sql_id.')

AND ROWNUM = 1;

EXCEPTION

WHEN OTHERS THEN

DBMS_OUTPUT.PUT_LINE('getting original sql_text from memory: '||SQLERRM);

:sql_text := NULL;

END;

/

-- get sql_text from awr

BEGIN

IF :sql_text IS NULL OR NVL(DBMS_LOB.GETLENGTH(:sql_text), 0) = 0 THEN

SELECT REPLACE(sql_text, CHR(00), ' ')

INTO :sql_text

FROM dba_hist_sqltext

WHERE sql_id = TRIM('&&original_sql_id.')

AND sql_text IS NOT NULL

AND ROWNUM = 1;

END IF;

EXCEPTION

WHEN OTHERS THEN

DBMS_OUTPUT.PUT_LINE('getting original sql_text from awr: '||SQLERRM);

:sql_text := NULL;

END;

/

-- sql_text as found

SELECT :sql_text FROM DUAL;

-- check if sql_text for original sql is available

SET TERM ON;

BEGIN

IF :sql_text IS NULL THEN

RAISE_APPLICATION_ERROR(-20100, 'SQL_TEXT for original SQL_ID &&original_sql_id. was not found in memory (gv$sqlarea) or AWR (dba_hist_sqltext).');

END IF;

END;

/

-- check phv is found

DECLARE

l_count NUMBER;

BEGIN

SELECT COUNT(*)

INTO l_count

FROM gv$sql

WHERE sql_id = TRIM('&&modified_sql_id.')

AND plan_hash_value = TO_NUMBER(TRIM('&&plan_hash_value.'));

IF l_count = 0 THEN

RAISE_APPLICATION_ERROR(-20110, 'PHV &&plan_hash_value. for modified SQL_ID &&modified_sql_id. was not found in memory (gv$sql).');

END IF;

END;

/

SET ECHO OFF;

DECLARE

plans NUMBER;

description VARCHAR2(500);

sys_sql_handle VARCHAR2(30);

sys_plan_name VARCHAR2(30);

BEGIN

-- create sql_plan_baseline for original sql using plan from modified sql

plans :=

DBMS_SPM.LOAD_PLANS_FROM_CURSOR_CACHE (

sql_id => TRIM('&&modified_sql_id.'),

plan_hash_value => TO_NUMBER(TRIM('&&plan_hash_value.')),

sql_text => :sql_text );

DBMS_OUTPUT.PUT_LINE('Plans Loaded: '||plans);

-- find handle and plan_name for sql_plan_baseline just created

SELECT sql_handle, plan_name

INTO sys_sql_handle, sys_plan_name

FROM dba_sql_plan_baselines

WHERE creator = USER

AND origin = 'MANUAL-LOAD'

AND created = ( -- past 1 minute only

SELECT MAX(created) max_created

FROM dba_sql_plan_baselines

WHERE creator = USER

AND origin = 'MANUAL-LOAD'

AND created > SYSDATE - (1/24/60));

DBMS_OUTPUT.PUT_LINE('sys_sql_handle: "'||sys_sql_handle||'"');

DBMS_OUTPUT.PUT_LINE('sys_plan_name: "'||sys_plan_name||'"');

-- update description of new sql_plan_baseline

description := UPPER('original:'||TRIM('&&original_sql_id.')||' modified:'||TRIM('&&modified_sql_id.')||' phv:'||TRIM('&&plan_hash_value.')||' created by coe_load_sql_baseline.sql');

plans :=

DBMS_SPM.ALTER_SQL_PLAN_BASELINE (

sql_handle => sys_sql_handle,

plan_name => sys_plan_name,

attribute_name => 'description',

attribute_value => description );

DBMS_OUTPUT.PUT_LINE(plans||' plan(s) modified description: "'||description||'"');

-- update plan_name of new sql_plan_baseline

:plan_name := UPPER(TRIM('&&original_sql_id.')||'_'||TRIM('&&modified_sql_id.'));

:plan_name := sys_plan_name; -- avoids ORA-38141: SQL plan baseline SQL_PLAN_64b0jqr2t1h3558b5ab4d does not exist

IF :plan_name <> sys_plan_name THEN

plans :=

DBMS_SPM.ALTER_SQL_PLAN_BASELINE (

sql_handle => sys_sql_handle,

plan_name => sys_plan_name,

attribute_name => 'plan_name',

attribute_value => :plan_name );

DBMS_OUTPUT.PUT_LINE(plans||' plan(s) modified plan_name: "'||:plan_name||'"');

END IF;

-- drop baseline staging table for original sql (if one exists)

BEGIN

DBMS_OUTPUT.PUT_LINE('dropping staging table "STGTAB_BASELINE_'||UPPER(TRIM('&&original_sql_id.'))||'"');

EXECUTE IMMEDIATE 'DROP TABLE STGTAB_BASELINE_'||UPPER(TRIM('&&original_sql_id.'));

EXCEPTION

WHEN OTHERS THEN

DBMS_OUTPUT.PUT_LINE('staging table "STGTAB_BASELINE_'||UPPER(TRIM('&&original_sql_id.'))||'" did not exist');

END;

-- create baseline staging table for original sql

DBMS_OUTPUT.PUT_LINE('creating staging table "STGTAB_BASELINE_'||UPPER(TRIM('&&original_sql_id.'))||'"');

DBMS_SPM.CREATE_STGTAB_BASELINE (

table_name => 'STGTAB_BASELINE_'||UPPER(TRIM('&&original_sql_id.')),

table_owner => '&&connected_user.' );

-- packs new baseline for original sql

DBMS_OUTPUT.PUT_LINE('packaging new sql baseline into staging table "STGTAB_BASELINE_'||UPPER(TRIM('&&original_sql_id.'))||'"');

plans :=

DBMS_SPM.PACK_STGTAB_BASELINE (

table_name => 'STGTAB_BASELINE_'||UPPER(TRIM('&&original_sql_id.')),

table_owner => '&&connected_user.',

sql_handle => sys_sql_handle,

plan_name => :plan_name );

DBMS_OUTPUT.PUT_LINE(plans||' plan(s) packaged');

END;

/

-- display details of new sql_plan_baseline

SET ECHO ON;

REM

REM SQL Plan Baseline

REM ~~~~~~~~~~~~~~~~~

REM

SELECT signature, sql_handle, plan_name, enabled, accepted, fixed--, reproduced (avail on 11.2.0.2)
  FROM dba_sql_plan_baselines WHERE plan_name = :plan_name;

SELECT description
  FROM dba_sql_plan_baselines WHERE plan_name = :plan_name;

SET ECHO OFF;

PRO

PRO ****************************************************************************

PRO * Enter &&connected_user. password to export staging table STGTAB_BASELINE_&&original_sql_id.

PRO ****************************************************************************

HOS exp &&connected_user. tables=&&connected_user..STGTAB_BASELINE_&&original_sql_id. file=STGTAB_BASELINE_&&original_sql_id..dmp statistics=NONE indexes=N constraints=N grants=N triggers=N

PRO

PRO If you need to implement this SQL Plan Baseline on a similar system,

PRO import and unpack using these commands:

PRO

PRO imp &&connected_user. file=STGTAB_BASELINE_&&original_sql_id..dmp tables=STGTAB_BASELINE_&&original_sql_id. ignore=Y

PRO

PRO SET SERVEROUT ON;;

PRO DECLARE

PRO plans NUMBER;;

PRO BEGIN

PRO plans := DBMS_SPM.UNPACK_STGTAB_BASELINE('STGTAB_BASELINE_&&original_sql_id.', '&&connected_user.');;

PRO DBMS_OUTPUT.PUT_LINE(plans||' plan(s) unpackaged');;

PRO END;;

PRO /

PRO

SPO OFF;

HOS zip -m coe_load_sql_baseline_&&original_sql_id. coe_load_sql_baseline_&&original_sql_id..log STGTAB_BASELINE_&&original_sql_id..dmp coe_load_sql_baseline.log

HOS zip -d coe_load_sql_baseline_&&original_sql_id. coe_load_sql_baseline.log

WHENEVER SQLERROR CONTINUE;

SET DEF ON TERM ON ECHO OFF FEED 6 VER ON HEA ON LIN 80 PAGES 14 LONG 80 LONGC 80 TRIMS OFF TI OFF TIMI OFF SERVEROUT OFF NUM 10 SQLP SQL>;

SET SERVEROUT OFF;

UNDEFINE 1 2 3 original_sql_id modified_sql_id plan_hash_value

CL COL

PRO

PRO coe_load_sql_baseline completed.
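Once the script completes, it may be worth confirming that the Original SQL actually picks up the new SQL Plan Baseline. A minimal sketch follows, assuming the Original SQL has been hard parsed and executed again after the baseline was created. The substitution variable original_sql_id is a placeholder here: the script undefines it on exit, so SQL*Plus will prompt for it.

```sql
-- sql_plan_baseline is populated in gv$sql for cursors that were built using a baseline
SELECT inst_id,
       child_number,
       plan_hash_value,
       sql_plan_baseline
  FROM gv$sql
 WHERE sql_id = TRIM('&original_sql_id.')
 ORDER BY
       inst_id,
       child_number;
```

If the baseline is in use, the newer child cursors should show the plan name reported by the script under sql_plan_baseline, with a plan_hash_value matching the one taken from the Modified SQL.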

Skipping ACS ramp-up using a SQL Patch

As I prepared for one of my sessions at ODTUG Kscope14, I came across the typical situation of having a SQL for which I wanted to produce multiple optimal Execution Plans on an 11g environment. As you may know, with Adaptive Cursor Sharing (ACS) this is possible and automatic, but the problem is that the ACS ramp-up process sometimes causes some suboptimal Execution Plans. If you want to skip this ACS ramp-up process, let’s say for a SQL that is part of a business-critical transaction and which is known to have unstable Plans, then you may want to create a SQL Patch with the BIND_AWARE Hint. Maria Colgan explained this method on this blog post. What I present here is a script I use to easily implement SQL Patches for a SQL where I just need to inject one or two CBO Hints, like BIND_AWARE. I use SQL Profiles or SQL Plan Management when I need to provide CBO Hints that affect access paths or join order, but if I just need something like skipping ACS ramp-up, or a Hint to produce a SQL Monitor report, then I’d rather use a SQL Patch.

The script below asks for a SQL_ID and for a short list of CBO Hints to include. By default it includes these 3: “GATHER_PLAN_STATISTICS MONITOR BIND_AWARE”. Execute this script connected as SYS.

----------------------------------------------------------------------------------------

--

-- File name: sqlpch.sql

--

-- Purpose: Create Diagnostics SQL Patch for one SQL_ID

--

-- Author: Carlos Sierra

--

-- Version: 2013/12/28

--

-- Usage: This script inputs two parameters. Parameter 1 the SQL_ID and Parameter 2

-- the set of Hints for the SQL Patch (default to GATHER_PLAN_STATISTICS

-- MONITOR BIND_AWARE).

--

-- Example: @sqlpch.sql f995z9antmhxn BIND_AWARE

--

-- Notes: Developed and tested on 11.2.0.3 and 12.1.0.1

--

---------------------------------------------------------------------------------------

SPO sqlpch.txt;

DEF def_hint_text = 'GATHER_PLAN_STATISTICS MONITOR BIND_AWARE';

SET DEF ON TERM OFF ECHO ON FEED OFF VER OFF HEA ON LIN 2000 PAGES 100 LONG 8000000 LONGC 800000 TRIMS ON TI OFF TIMI OFF SERVEROUT ON SIZE 1000000 NUMF "" SQLP SQL>;

SET SERVEROUT ON SIZE UNL;

COL hint_text NEW_V hint_text FOR A300;

SET TERM ON ECHO OFF;

PRO

PRO Parameter 1:

PRO SQL_ID (required)

PRO

DEF sql_id_1 = '&1';

PRO

PRO Parameter 2:

PRO HINT_TEXT (default: &&def_hint_text.)

PRO

DEF hint_text_2 = '&2';

PRO

PRO Values passed:

PRO ~~~~~~~~~~~~~

PRO SQL_ID : "&&sql_id_1."

PRO HINT_TEXT: "&&hint_text_2." (default: "&&def_hint_text.")

PRO

SET TERM OFF ECHO ON;

SELECT TRIM(NVL(REPLACE('&&hint_text_2.', '"', ''''''), '&&def_hint_text.')) hint_text FROM dual;

WHENEVER SQLERROR EXIT SQL.SQLCODE;

-- trim sql_id parameter

COL sql_id NEW_V sql_id FOR A30;

SELECT TRIM('&&sql_id_1.') sql_id FROM DUAL;

VAR sql_text CLOB;

VAR sql_text2 CLOB;

EXEC :sql_text := NULL;

EXEC :sql_text2 := NULL;

-- get sql_text from memory

DECLARE

l_sql_text VARCHAR2(32767);

BEGIN -- 10g see bug 5017909

FOR i IN (SELECT DISTINCT piece, sql_text

FROM gv$sqltext_with_newlines

WHERE sql_id = TRIM('&&sql_id.')

ORDER BY 1, 2)

LOOP

IF :sql_text IS NULL THEN

DBMS_LOB.CREATETEMPORARY(:sql_text, TRUE);

DBMS_LOB.OPEN(:sql_text, DBMS_LOB.LOB_READWRITE);

END IF;

l_sql_text := REPLACE(i.sql_text, CHR(00), ' '); -- removes NUL characters

DBMS_LOB.WRITEAPPEND(:sql_text, LENGTH(l_sql_text), l_sql_text);

END LOOP;

-- if found in memory then sql_text is not null

IF :sql_text IS NOT NULL THEN

DBMS_LOB.CLOSE(:sql_text);

END IF;

EXCEPTION

WHEN OTHERS THEN

DBMS_OUTPUT.PUT_LINE('getting sql_text from memory: '||SQLERRM);

:sql_text := NULL;

END;

/

SELECT :sql_text FROM DUAL;

-- get sql_text from awr

DECLARE

l_sql_text VARCHAR2(32767);

l_clob_size NUMBER;

l_offset NUMBER;

BEGIN

IF :sql_text IS NULL OR NVL(DBMS_LOB.GETLENGTH(:sql_text), 0) = 0 THEN

SELECT sql_text

INTO :sql_text2

FROM dba_hist_sqltext

WHERE sql_id = TRIM('&&sql_id.')

AND sql_text IS NOT NULL

AND ROWNUM = 1;

END IF;

-- if found in awr then sql_text2 is not null

IF :sql_text2 IS NOT NULL THEN

l_clob_size := NVL(DBMS_LOB.GETLENGTH(:sql_text2), 0);

l_offset := 1;

DBMS_LOB.CREATETEMPORARY(:sql_text, TRUE);

DBMS_LOB.OPEN(:sql_text, DBMS_LOB.LOB_READWRITE);

-- store in clob as 64 character pieces

WHILE l_offset < l_clob_size

LOOP

IF l_clob_size - l_offset > 64 THEN

l_sql_text := REPLACE(DBMS_LOB.SUBSTR(:sql_text2, 64, l_offset), CHR(00), ' ');

ELSE -- last piece

l_sql_text := REPLACE(DBMS_LOB.SUBSTR(:sql_text2, l_clob_size - l_offset + 1, l_offset), CHR(00), ' ');

END IF;

DBMS_LOB.WRITEAPPEND(:sql_text, LENGTH(l_sql_text), l_sql_text);

l_offset := l_offset + 64;

END LOOP;

DBMS_LOB.CLOSE(:sql_text);

END IF;

EXCEPTION

WHEN OTHERS THEN

DBMS_OUTPUT.PUT_LINE('getting sql_text from awr: '||SQLERRM);

:sql_text := NULL;

END;

/

SELECT :sql_text2 FROM DUAL;

SELECT :sql_text FROM DUAL;

-- validate sql_text

BEGIN

IF :sql_text IS NULL THEN

RAISE_APPLICATION_ERROR(-20100, 'SQL_TEXT for SQL_ID &&sql_id. was not found in memory (gv$sqltext_with_newlines) or AWR (dba_hist_sqltext).');

END IF;

END;

/

PRO generate SQL Patch for SQL "&&sql_id." with CBO Hints "&&hint_text."

SELECT loaded_versions, invalidations, address, hash_value
  FROM v$sqlarea WHERE sql_id = '&&sql_id.' ORDER BY 1;

SELECT child_number, plan_hash_value, executions, is_shareable
  FROM v$sql WHERE sql_id = '&&sql_id.' ORDER BY 1, 2;

-- drop prior SQL Patch

WHENEVER SQLERROR CONTINUE;

PRO ignore errors

EXEC DBMS_SQLDIAG.DROP_SQL_PATCH(name => 'sqlpch_&&sql_id.');

WHENEVER SQLERROR EXIT SQL.SQLCODE;

-- create SQL Patch

PRO you have to connect as SYS

BEGIN

SYS.DBMS_SQLDIAG_INTERNAL.I_CREATE_PATCH (

sql_text => :sql_text,

hint_text => '&&hint_text.',

name => 'sqlpch_&&sql_id.',

category => 'DEFAULT',

description => '/*+ &&hint_text. */'

);

END;

/

-- flush cursor from shared_pool

PRO *** before flush ***

SELECT inst_id, loaded_versions, invalidations, address, hash_value
  FROM gv$sqlarea WHERE sql_id = '&&sql_id.' ORDER BY 1;

SELECT inst_id, child_number, plan_hash_value, executions, is_shareable
  FROM gv$sql WHERE sql_id = '&&sql_id.' ORDER BY 1, 2;

PRO *** flushing &&sql_id. ***

BEGIN

FOR i IN (SELECT address, hash_value

FROM gv$sqlarea WHERE sql_id = '&&sql_id.')

LOOP

DBMS_OUTPUT.PUT_LINE(i.address||','||i.hash_value);

BEGIN

SYS.DBMS_SHARED_POOL.PURGE (

name => i.address||','||i.hash_value,

flag => 'C'

);

EXCEPTION

WHEN OTHERS THEN

DBMS_OUTPUT.PUT_LINE(SQLERRM);

END;

END LOOP;

END;

/

PRO *** after flush ***

SELECT inst_id, loaded_versions, invalidations, address, hash_value
  FROM gv$sqlarea WHERE sql_id = '&&sql_id.' ORDER BY 1;

SELECT inst_id, child_number, plan_hash_value, executions, is_shareable
  FROM gv$sql WHERE sql_id = '&&sql_id.' ORDER BY 1, 2;

WHENEVER SQLERROR CONTINUE;

SET DEF ON TERM ON ECHO OFF FEED 6 VER ON HEA ON LIN 80 PAGES 14 LONG 80 LONGC 80 TRIMS OFF TI OFF TIMI OFF SERVEROUT OFF NUMF "" SQLP SQL>;

SET SERVEROUT OFF;

PRO

PRO SQL Patch "sqlpch_&&sql_id." will be used on next parse.

PRO To drop SQL Patch on this SQL:

PRO EXEC DBMS_SQLDIAG.DROP_SQL_PATCH(name => 'sqlpch_&&sql_id.');

PRO

UNDEFINE 1 2 sql_id_1 sql_id hint_text_2 hint_text

CL COL

PRO

PRO sqlpch completed.

SPO OFF;
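After the SQL has been parsed again, a quick check can tell whether the SQL Patch was picked up and whether the cursor skipped the ACS ramp-up. A minimal sketch follows, assuming the patch name follows the sqlpch_ prefix used by the script above. The substitution variable sql_id is a placeholder here: the script undefines it on exit, so SQL*Plus will prompt for it.

```sql
-- sql_patch shows the patch name on cursors parsed with it;
-- is_bind_aware = 'Y' on the first child suggests the BIND_AWARE hint took effect
SELECT inst_id,
       child_number,
       plan_hash_value,
       sql_patch,
       is_bind_aware
  FROM gv$sql
 WHERE sql_id = TRIM('&&sql_id.')
 ORDER BY
       inst_id,
       child_number;

-- the patch itself, as recorded in the data dictionary
SELECT name,
       status,
       created,
       description
  FROM dba_sql_patches
 WHERE name = 'sqlpch_'||TRIM('&&sql_id.');
```

An empty sql_patch column on a freshly parsed cursor would suggest the patch did not match the SQL text, which is worth ruling out before looking at the hints themselves.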