Archive for the ‘PDB’ Category

Scripts to deal with SQL Plan Baselines, SQL Profiles and SQL Patches

To mitigate SQL performance issues, I use SQL Plan Baselines, SQL Profiles and SQL Patches on a daily basis. Our environments are single-instance 12.1.0.2 CDBs, with over 2,000 PDBs. Our goal is Execution Plan stability and consistent performance over CBO plan flexibility. The CBO does a good job, considering the complexity imposed by current application design. Nevertheless, some SQL statements need help in order to improve their plan stability.

I have written a set of scripts that make a bunch of APIs a lot easier to use, with better documented actions, and in a way that is fully consistent within the organization. I have shared these scripts with the community in the past, and I keep them updated as needs change. All these “CS” scripts are available under the download section on the right column.

The current version of the CS scripts is more like a toolset: you treat them as a whole. All of them call some other script that lives within the cs_internal subdirectory, so I usually navigate to the parent sql directory and connect into SQL*Plus from there. All these scripts can be easily cloned and/or customized to your specific needs. They are available as “free to use” and “as is”. There is no requirement to keep their heading intact, so you can reverse-engineer them and make them your own if you want. Just keep in mind that I maintain, enhance, and extend this CS toolset every single day, so what you get today is a subset of what you will get tomorrow. If you think an enhancement you need (or a fix) is beneficial to the larger community (and to you), please let me know.

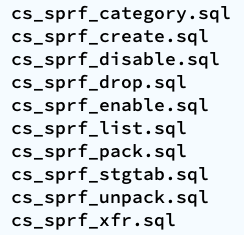

SQL Plan Baselines scripts

With the set of SQL Plan Baselines scripts, you can: 1) create a baseline based on a cursor or a plan stored into AWR; 2) enable and disable baselines; 3) drop baselines; 4) store them into a local staging table; 5) restore them from their local staging table; 6) promote as “fixed” or demote from “fixed”; 7) “zap” them if you have installed “El Zapper” (iod_spm).
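For readers who have not called the underlying API directly, item 1 boils down to something like the sketch below. It is not taken from the CS scripts; it only assumes the public DBMS_SPM package, and the sql_id and plan_hash_value shown are placeholders to be replaced with your own values.

```sql
-- Sketch only, not the CS script itself.
-- Placeholders (assumptions): the sql_id and plan_hash_value below.
SET SERVEROUTPUT ON
DECLARE
  l_plans PLS_INTEGER;
BEGIN
  -- Load one plan from the cursor cache as an enabled, non-fixed baseline
  l_plans := DBMS_SPM.LOAD_PLANS_FROM_CURSOR_CACHE(
               sql_id          => '0a1b2c3d4e5f6',
               plan_hash_value => 1234567890,
               fixed           => 'NO',
               enabled         => 'YES');
  DBMS_OUTPUT.PUT_LINE('plans loaded: '||l_plans);
END;
/

-- Verify: baselines are keyed by signature, not by sql_id
SELECT b.sql_handle, b.plan_name, b.enabled, b.accepted, b.fixed
  FROM dba_sql_plan_baselines b
 WHERE b.signature = (SELECT s.exact_matching_signature
                        FROM v$sql s
                       WHERE s.sql_id = '0a1b2c3d4e5f6'
                         AND ROWNUM = 1);
```

The sql_handle and plan_name returned by the query are what the other operations need: for instance, promoting to “fixed” would go through DBMS_SPM.ALTER_SQL_PLAN_BASELINE with attribute_name 'fixed', and dropping through DBMS_SPM.DROP_SQL_PLAN_BASELINE.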

Note: “El Zapper” is a PL/SQL package that extends the functionality of SQL Plan Management by automagically creating SQL Plan Baselines based on proven performance of a SQL statement over time, while considering a large number of executions, and a variety of historical plans. Please do not confuse “El Zapper” with auto-evolve of SPM. They are based on two very distinct premises. “El Zapper” also monitors the performance of active SQL Plan Baselines, and during an observation window it may disable a SQL Plan Baseline, if such plan no longer performs as “promised” (according to some thresholds). Most applications do not need “El Zapper”, since the use of SQL Plan Management should be more of an exception than a rule.

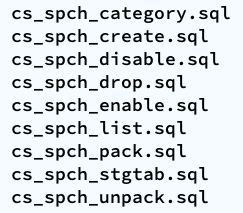

SQL Profiles scripts

With the set of SQL Profiles scripts, you can: 1) create a profile based on the outline of a cursor, or from a plan stored in AWR; 2) enable and disable profiles; 3) drop profiles; 4) store them into a local staging table; 5) restore them from their local staging table; 6) transfer them from one location to another (very similar to coe_xfr_sql_profile.sql, but in a more modular way).

Note: Regarding the transfer of a SQL Profile, the concept is simple: 1) on the source location, generate two plain text scripts, one that contains the SQL text, and a second that includes the Execution Plan (outline); 2) execute these two scripts on a target location in order to create a SQL Profile there. The beauty of this method is not only that you can easily move Execution Plans between locations, but that you can actually create a SQL Profile getting the SQL Text from SQL_ID “A” and the Execution Plan from SQL_ID “B”. This allows you to do things like removing CBO Hints, or using a plan from a similar SQL that is not quite the same. For example, I can tweak a stand-alone cloned version of a SQL statement, and once I get the plan that I need, I associate the SQL Text from the original SQL with the desired Execution Plan out of the stand-alone customized version. After that I create a SQL Plan Baseline and drop the staging SQL Profile.
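The “text from A, plan from B” idea can be sketched in a single block, as below. This is not the CS transfer script (which works through two generated text files); it is a simplified illustration that assumes both statements are still in the cursor cache of the same database. The substitution variables sql_id_a, sql_id_b and phv_b, and the xfr_ profile name, are hypothetical.

```sql
-- Sketch only. Assumptions: both statements are in the cursor cache,
-- &&sql_id_a. supplies the SQL text, &&sql_id_b. and &&phv_b. supply the plan,
-- and every outline hint fits in 500 characters (the SQLPROF_ATTR element size).
DECLARE
  l_sql_text CLOB;
  l_hints    SYS.SQLPROF_ATTR := SYS.SQLPROF_ATTR();
BEGIN
  -- SQL text comes from statement "A"
  SELECT sql_fulltext
    INTO l_sql_text
    FROM v$sql
   WHERE sql_id = '&&sql_id_a.'
     AND ROWNUM = 1;

  -- Outline hints come from the plan of statement "B" (one child cursor only)
  FOR i IN (SELECT x.hint
              FROM v$sql_plan p,
                   XMLTABLE('/*/outline_data/hint'
                            PASSING XMLTYPE(p.other_xml)
                            COLUMNS hint VARCHAR2(500) PATH '.') x
             WHERE p.sql_id = '&&sql_id_b.'
               AND p.plan_hash_value = &&phv_b.
               AND p.other_xml IS NOT NULL
               AND p.child_number = (SELECT MIN(c.child_number)
                                       FROM v$sql_plan c
                                      WHERE c.sql_id = p.sql_id
                                        AND c.plan_hash_value = p.plan_hash_value
                                        AND c.other_xml IS NOT NULL))
  LOOP
    l_hints.EXTEND;
    l_hints(l_hints.COUNT) := i.hint;
  END LOOP;

  -- Associate the text of "A" with the outline of "B"
  DBMS_SQLTUNE.IMPORT_SQL_PROFILE(
    sql_text    => l_sql_text,
    profile     => l_hints,
    name        => 'xfr_&&sql_id_a._&&phv_b.',
    description => 'text from &&sql_id_a. plan from &&sql_id_b.',
    replace     => TRUE,
    force_match => FALSE);
END;
/
```

Splitting this into two generated scripts, as the note describes, is what makes the profile portable: the text and the outline travel as plain files and can be replayed on any target.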

SQL Patches scripts

With the set of SQL Patches scripts, you can: 1) create a SQL patch based on one or more CBO Hints you provide (e.g.: GATHER_PLAN_STATISTICS MONITOR FIRST_ROWS(1) OPT_PARAM('_fix_control' '5922070:OFF') NO_BIND_AWARE); 2) enable and disable SQL patches; 3) drop SQL patches; 4) store them into a local staging table; 5) restore them from their local staging table.

Note: I use SQL Patches a lot, especially to embed CBO Hints that generate some desirable diagnostic details (and not so much to change plans), such as the ones provided by GATHER_PLAN_STATISTICS and MONITOR. In some cases, after I use the pathfinder tool written by Mauro Pagano, I have to disable a CBO patch (funny thing: I use a SQL Patch to disable a CBO Patch!). I also use a SQL Patch if I need to enable Adaptive Cursor Sharing (ACS) for one SQL (we disabled ACS for one major application). Bear in mind that SQL Plan Baselines, SQL Profiles and SQL Patches happily co-exist, so you can use them together, but I do prefer to use SQL Plan Baselines alone, whenever possible.
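As a rough illustration of the diagnostics use case, the sketch below attaches GATHER_PLAN_STATISTICS and MONITOR to one statement. It is not taken from the CS scripts, and it assumes Oracle 12.2 or later, where DBMS_SQLDIAG.CREATE_SQL_PATCH is a documented public function (the 12.1.0.2 environments described here predate it). The sql_id and the patch name are placeholders.

```sql
-- Sketch only, assuming Oracle 12.2 or later.
-- Placeholders (assumptions): the sql_id and the patch name.
SET SERVEROUTPUT ON
DECLARE
  l_name VARCHAR2(128);
BEGIN
  -- Embed diagnostics hints without touching the application SQL
  l_name := DBMS_SQLDIAG.CREATE_SQL_PATCH(
              sql_id    => '0a1b2c3d4e5f6',
              hint_text => 'GATHER_PLAN_STATISTICS MONITOR',
              name      => 'patch_0a1b2c3d4e5f6');
  DBMS_OUTPUT.PUT_LINE('created: '||l_name);
END;
/

-- Verify the patch exists and is enabled
SELECT name, status, created
  FROM dba_sql_patches
 WHERE name = 'patch_0a1b2c3d4e5f6';
```

Once the diagnostics have been collected, the patch can be removed with DBMS_SQLDIAG.DROP_SQL_PATCH, passing the same name.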

Adapting and adopting SQL Plan Management (SPM)

Introduction

This post is about: “Adapting and adopting SQL Plan Management (SPM) to achieve execution plan stability for sub-second queries on a high-rate OLTP mission-critical application”. In our case, such an application is implemented on top of several Oracle 12c multitenant databases, where a consistent average execution time is more valuable than flexible execution plans. We successfully achieved plan stability by implementing a simple algorithm using PL/SQL calling DBMS_SPM public APIs.

The chart below depicts a typical case where the average performance of a large set of business-critical SQL statements suddenly degraded from sub-millisecond to 15 or 20ms, then became more stable around 3ms. Wide spikes are a typical trademark of an Execution Plan for one or more SQL statements flipping for some time. In order to produce a more consistent latency we needed to improve plan stability, and of course the preferred tool to achieve that on an Oracle database is SQL Plan Management.

Algorithm

We tested and ruled out adaptive SQL Plan Management, which is an excellent new 12c feature. Due to the dynamics of this application, where transactional data shifts so fast, allowing this “adaptive SPM” feature to evaluate auto-captured plans using bind variable values captured a few hours earlier unfortunately produced false positives. These false positives were “evolved” as execution plans that were numerically optimal for the values captured (at the time the candidate plan was captured), but performed poorly when executed on “current” values a few hours later. Nevertheless, this 12c “adaptive SPM” feature is worth exploring for other applications.

We adapted SPM so it would only generate SQL Plan Baselines on SQL that executes often, and that is critical for the business. The algorithm has some complexity, such as candidate evaluation and SQL categorization; besides SPB creation, it also includes plan demotion and plan promotion. We have successfully implemented it in some PDBs and we are currently doing a rollout to entire CDBs. The algorithm is depicted in the diagram on the left, and more details are included in the corresponding presentation slides listed on the right-hand bar. I plan to talk about this topic at an international Oracle Users Group in 2018.

This algorithm is scripted into a sample PL/SQL package, which you can find in a subdirectory of my shared scripts. If you consider using this sample script for an application of your own, be sure you make it yours before attempting to use it. In other words: fully understand it first, then proceed to customize it accordingly and test it thoroughly.
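To give a feel for what a candidate evaluation step might look like, here is a minimal sketch against V$SQL. It is not the logic of the sample package: the three thresholds (executions, time per execution, cursor age) are hypothetical values picked only for illustration.

```sql
-- Sketch only: thresholds are hypothetical, not the ones used by the package.
SELECT sql_id,
       plan_hash_value,
       SUM(executions) AS total_execs,
       ROUND(SUM(elapsed_time) / SUM(executions) / 1000, 3) AS avg_et_ms,
       MIN(TO_DATE(first_load_time, 'YYYY-MM-DD/HH24:MI:SS')) AS first_load
  FROM v$sql
 WHERE sql_plan_baseline IS NULL   -- cursor is not using a baseline yet
   AND executions > 0
 GROUP BY sql_id, plan_hash_value
HAVING SUM(executions) >= 10000    -- executes often
   AND SUM(elapsed_time) / SUM(executions) <= 1000   -- at most 1 ms (1000 us) per execution
   AND MIN(TO_DATE(first_load_time, 'YYYY-MM-DD/HH24:MI:SS')) <= SYSDATE - 1   -- cursor at least 1 day old
 ORDER BY total_execs DESC;
```

Each row returned is a (sql_id, plan_hash_value) pair that could be handed to DBMS_SPM.LOAD_PLANS_FROM_CURSOR_CACHE. A real implementation would also need the categorization, demotion and promotion logic mentioned above.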

Results

The chart below shows how the average performance of business-critical SQL became more stable after implementing the algorithm to adapt and adopt SPM on a pilot PDB. Not everything went smoothly, though: we had some outliers that required some tuning of the algorithm. Among the challenges we faced: volatile data (creating an SPB when a table was almost empty, then using it when the table was larger); skewed values (creating an SPB for a non-popular value, then using it on a popular value); proper use of multiple optimal plans due to Adaptive Cursor Sharing (ACS); and rejected candidates due to conservative initial restrictions in the algorithm (performance per execution, number of executions, age of cursor, etc.).

Conclusion

If your OLTP application contains business-critical SQL that executes at a high rate, and where a spike in latency risks affecting SLAs, you may want to consider implementing SQL Plan Management. Consider “adaptive SPM” if it satisfies your requirements; otherwise, build a PL/SQL library that can implement more complex logic for candidate evaluation and for SPB maintenance. I do believe SPM works great, especially when you enhance its out-of-the-box functionality to satisfy your specific needs.

One Maintenance Window for all PDBs… Really?

Using the default Maintenance Window for automatic tasks such as statistics gathering and the space advisor is just fine… except when the number of PDBs is large (in our case over 100 PDBs in one DB). Starting resource-intensive tasks regulated by the Maintenance Window causes CPU to spike when all PDBs start their weekday window at 10PM…

Why should I care that my CPU spikes at 100% when all 100+ PDBs start their Maintenance Window at 10PM? At that time I should be sleeping, right? Well, if your shop is a quasi 24×7, then such spikes can be noticeable to your users, and possibly affect SLAs.

The solution is to stagger the start time of the Maintenance Window for all your PDBs. For example, start one PDB at 10 PM and another at 10:30 and so on. If you want to start them all within a 4-hour interval, and if you have 100 PDBs, that means you can start 25 per hour. You may also want to reduce the window duration for each PDB from the default of 4 hours to, let’s say, 1 hour. So you could start all within 4 hours, and have the last one close its window one hour later; thus the total time for all Maintenance Windows could be 5 hours, and you would have flattened the CPU spike.
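The arithmetic can be checked with a small stand-alone query. The sketch below fabricates 100 hypothetical PDBs with CONNECT BY and applies the same spacing expression that the pdb_windows.sql script further down uses: offset = (rank - 1) * hours / (count - 1). The 22:00 start and the 4-hour spread are the example values from the paragraph above.

```sql
-- Sketch only: 100 hypothetical PDBs, first window at 22:00,
-- all windows opening within a 4-hour interval.
WITH pdbs AS (
  SELECT LEVEL AS rank_num FROM dual CONNECT BY LEVEL <= 100
)
SELECT rank_num,
       TO_CHAR(TRUNC(SYSDATE) + 22 / 24 + (rank_num - 1) * 4 / (100 - 1) / 24,
               'HH24:MI:SS') AS window_opens
  FROM pdbs
 WHERE rank_num IN (1, 2, 3, 100)   -- first few slots and the last one
 ORDER BY rank_num;
```

With 100 PDBs the slots end up about 2 minutes 25 seconds apart (4 hours divided by 99). Dividing by count - 1 rather than count puts the last PDB at the end of the 4-hour interval instead of one slot before it.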

In our case we are a 24×7 shop, and in some cases one of the tasks executed during the Maintenance Window invalidates a cursor, which renders a new Execution Plan (waking at times the on-call DBA). So we decided to start our Maintenance Windows at 8 AM Pacific time (15:00 UTC while daylight saving time is in effect), stagger all PDBs to start their window within 4 hours (between 15 and 19 UTC), and give each a maximum duration of 1 hour. On weekends we start at the same time, but allow all PDBs to start their window within 5 hours, with a maximum duration of 2 hours. So on weekends we open the first Maintenance Window at 8 AM and the last one at 1 PM, while allowing each PDB to take up to 2 hours. This is not the ideal solution, but it is much better than opening the gate for 100+ PDBs all at the same time.

The fun part is how to apply such a change to all databases in all regions, where on each server every PDB would have a Maintenance Window that starts at a different time. We ended up with the script below, which takes some variables to specify the start time, the lapse of time during which PDBs can start their Maintenance Window, and its duration per PDB. This script is written in such a way that it can be executed using OEM targeting all databases. Feel free to use it in case you are dealing with PDBs and see CPU spikes caused by the Maintenance Window, and such spikes affect user experience.

----------------------------------------------------------------------------------------

--

-- File name: pdb_windows.sql

--

-- Purpose: Sets staggered maintenance windows for all PDBs in order to avoid CPU

-- spikes caused by all PDBs starting their maintenance window at the same

-- time

--

-- Author: Carlos Sierra

--

-- Version: 2017/07/12

--

-- Usage: Execute as SYS on CDB

--

-- Example: @pdb_windows.sql

--

-- Notes:

-- Stagger PDBs maintenance windows to start at different times in order to

-- reduce CPU spikes, for example: all PDBs start their maintenance window

-- between 8AM PST and 10AM PST (2 hours), and each window lasts 4 hours,

-- so all maintenance tasks are executed between 8AM and 2PM PST.

-- Notice that in such example, 1st window opens at 8AM and last one opens

-- at 10AM. First closes at 12NOON and last at 2PM

-- Be aware that default cursor invalidation may happen a few hours (3 or 4)

-- after a maintenance window closes. Then, since most PDBs process their

-- tasks fast, actual cursor invalidation may happen between 11AM and 1PM.

--

---------------------------------------------------------------------------------------

SET LINES 300 SERVEROUTPUT ON

COL report_date NEW_V report_date;

SELECT TO_CHAR(SYSDATE, 'YYYY-MM-DD"T"HH24-MI-SS') report_date FROM DUAL;

SPO /tmp/pdb_window_&&report_date..txt;

-- hour of the day (military format) when first maintenance window for a PDB may open during week days

-- i.e. if dbtimezone is UTC and we want to open 1st at 8AM PST we set to 15

VAR weekday_start_hh24 NUMBER;

EXEC :weekday_start_hh24 := 15;

-- for how many hours we want to open maintenance windows for PDBs during week days

-- i.e. if we want to open windows during a 2 hours interval we set to 2

VAR weekday_hours NUMBER;

EXEC :weekday_hours := 2;

-- how long we want the maintenance window to last for each PDB during week days

-- i.e. if we want each PDB to have a window of 4 hours then we set to 4

VAR weekday_duration NUMBER;

EXEC :weekday_duration := 4;

-- hour of the day (military format) when first maintenance window for a PDB may open during weekends

-- i.e. if dbtimezone is UTC and we want to open 1st at 8AM PST we set to 15

VAR weekend_start_hh24 NUMBER;

EXEC :weekend_start_hh24 := 15;

-- for how many hours we want to open maintenance windows for PDBs during weekends

-- i.e. if we want to open windows for 2 hours interval we set to 2

VAR weekend_hours NUMBER;

EXEC :weekend_hours := 2;

-- how long we want the maintenance window to last for each PDB during weekends

-- i.e. if we want each PDB to have a window of 8 hours then we set to 8

VAR weekend_duration NUMBER;

EXEC :weekend_duration := 8;

-- PL/SQL block to be executed on each PDB

VAR v_cursor CLOB;

BEGIN

:v_cursor := q'[

DECLARE

PRAGMA AUTONOMOUS_TRANSACTION;

BEGIN

DBMS_SCHEDULER.SET_SCHEDULER_ATTRIBUTE('DEFAULT_TIMEZONE','+00:00');

DBMS_SCHEDULER.DISABLE('MONDAY_WINDOW', TRUE);

DBMS_SCHEDULER.SET_ATTRIBUTE('MONDAY_WINDOW', 'repeat_interval', 'freq=daily; byday=MON; byhour='||:b_weekdays_hh24||'; byminute='||:b_weekdays_mi||'; bysecond='||:b_weekdays_ss);

DBMS_SCHEDULER.SET_ATTRIBUTE('MONDAY_WINDOW', 'duration', TO_DSINTERVAL('+000 '||LPAD(:b_weekday_duration, 2, '0')||':00:00'));

DBMS_SCHEDULER.SET_ATTRIBUTE_NULL('MONDAY_WINDOW', 'resource_plan');

DBMS_SCHEDULER.ENABLE('MONDAY_WINDOW');

DBMS_SCHEDULER.DISABLE('TUESDAY_WINDOW', TRUE);

DBMS_SCHEDULER.SET_ATTRIBUTE('TUESDAY_WINDOW', 'repeat_interval', 'freq=daily; byday=TUE; byhour='||:b_weekdays_hh24||'; byminute='||:b_weekdays_mi||'; bysecond='||:b_weekdays_ss);

DBMS_SCHEDULER.SET_ATTRIBUTE('TUESDAY_WINDOW', 'duration', TO_DSINTERVAL('+000 '||LPAD(:b_weekday_duration, 2, '0')||':00:00'));

DBMS_SCHEDULER.SET_ATTRIBUTE_NULL('TUESDAY_WINDOW', 'resource_plan');

DBMS_SCHEDULER.ENABLE('TUESDAY_WINDOW');

DBMS_SCHEDULER.DISABLE('WEDNESDAY_WINDOW', TRUE);

DBMS_SCHEDULER.SET_ATTRIBUTE('WEDNESDAY_WINDOW', 'repeat_interval', 'freq=daily; byday=WED; byhour='||:b_weekdays_hh24||'; byminute='||:b_weekdays_mi||'; bysecond='||:b_weekdays_ss);

DBMS_SCHEDULER.SET_ATTRIBUTE('WEDNESDAY_WINDOW', 'duration', TO_DSINTERVAL('+000 '||LPAD(:b_weekday_duration, 2, '0')||':00:00'));

DBMS_SCHEDULER.SET_ATTRIBUTE_NULL('WEDNESDAY_WINDOW', 'resource_plan');

DBMS_SCHEDULER.ENABLE('WEDNESDAY_WINDOW');

DBMS_SCHEDULER.DISABLE('THURSDAY_WINDOW', TRUE);

DBMS_SCHEDULER.SET_ATTRIBUTE('THURSDAY_WINDOW', 'repeat_interval', 'freq=daily; byday=THU; byhour='||:b_weekdays_hh24||'; byminute='||:b_weekdays_mi||'; bysecond='||:b_weekdays_ss);

DBMS_SCHEDULER.SET_ATTRIBUTE('THURSDAY_WINDOW', 'duration', TO_DSINTERVAL('+000 '||LPAD(:b_weekday_duration, 2, '0')||':00:00'));

DBMS_SCHEDULER.SET_ATTRIBUTE_NULL('THURSDAY_WINDOW', 'resource_plan');

DBMS_SCHEDULER.ENABLE('THURSDAY_WINDOW');

DBMS_SCHEDULER.DISABLE('FRIDAY_WINDOW', TRUE);

DBMS_SCHEDULER.SET_ATTRIBUTE('FRIDAY_WINDOW', 'repeat_interval', 'freq=daily; byday=FRI; byhour='||:b_weekdays_hh24||'; byminute='||:b_weekdays_mi||'; bysecond='||:b_weekdays_ss);

DBMS_SCHEDULER.SET_ATTRIBUTE('FRIDAY_WINDOW', 'duration', TO_DSINTERVAL('+000 '||LPAD(:b_weekday_duration, 2, '0')||':00:00'));

DBMS_SCHEDULER.SET_ATTRIBUTE_NULL('FRIDAY_WINDOW', 'resource_plan');

DBMS_SCHEDULER.ENABLE('FRIDAY_WINDOW');

DBMS_SCHEDULER.DISABLE('SATURDAY_WINDOW', TRUE);

DBMS_SCHEDULER.SET_ATTRIBUTE('SATURDAY_WINDOW', 'repeat_interval', 'freq=daily; byday=SAT; byhour='||:b_weekends_hh24||'; byminute='||:b_weekends_mi||'; bysecond='||:b_weekends_ss);

DBMS_SCHEDULER.SET_ATTRIBUTE('SATURDAY_WINDOW', 'duration', TO_DSINTERVAL('+000 '||LPAD(:b_weekend_duration, 2, '0')||':00:00'));

DBMS_SCHEDULER.SET_ATTRIBUTE_NULL('SATURDAY_WINDOW', 'resource_plan');

DBMS_SCHEDULER.ENABLE('SATURDAY_WINDOW');

DBMS_SCHEDULER.DISABLE('SUNDAY_WINDOW', TRUE);

DBMS_SCHEDULER.SET_ATTRIBUTE('SUNDAY_WINDOW', 'repeat_interval', 'freq=daily; byday=SUN; byhour='||:b_weekends_hh24||'; byminute='||:b_weekends_mi||'; bysecond='||:b_weekends_ss);

DBMS_SCHEDULER.SET_ATTRIBUTE('SUNDAY_WINDOW', 'duration', TO_DSINTERVAL('+000 '||LPAD(:b_weekend_duration, 2, '0')||':00:00'));

DBMS_SCHEDULER.SET_ATTRIBUTE_NULL('SUNDAY_WINDOW', 'resource_plan');

DBMS_SCHEDULER.ENABLE('SUNDAY_WINDOW');

-- Weeknight window - for compatibility only - KEEP IT DISABLED

DBMS_SCHEDULER.DISABLE('WEEKNIGHT_WINDOW', TRUE);

DBMS_SCHEDULER.SET_ATTRIBUTE('WEEKNIGHT_WINDOW', 'repeat_interval', 'freq=daily; byday=MON,TUE,WED,THU,FRI; byhour='||:b_weekdays_hh24||'; byminute='||:b_weekdays_mi||'; bysecond='||:b_weekdays_ss);

DBMS_SCHEDULER.SET_ATTRIBUTE('WEEKNIGHT_WINDOW', 'duration', TO_DSINTERVAL('+000 '||LPAD(:b_weekday_duration, 2, '0')||':00:00'));

--DBMS_SCHEDULER.ENABLE('WEEKNIGHT_WINDOW'); KEEP IT DISABLED - Weeknight window - for compatibility only

-- Weekend window - for compatibility only - KEEP IT DISABLED

DBMS_SCHEDULER.DISABLE('WEEKEND_WINDOW', TRUE);

DBMS_SCHEDULER.SET_ATTRIBUTE('WEEKEND_WINDOW', 'repeat_interval', 'freq=daily; byday=SAT,SUN; byhour='||:b_weekends_hh24||'; byminute='||:b_weekends_mi||'; bysecond='||:b_weekends_ss);

DBMS_SCHEDULER.SET_ATTRIBUTE('WEEKEND_WINDOW', 'duration', TO_DSINTERVAL('+000 '||LPAD(:b_weekend_duration, 2, '0')||':00:00'));

--DBMS_SCHEDULER.ENABLE('WEEKEND_WINDOW'); KEEP IT DISABLED - Weekend window - for compatibility only

COMMIT;

END;

]';

END;

/

PRINT v_cursor;

DECLARE

l_cursor_id INTEGER;

l_rows_processed INTEGER;

BEGIN

l_cursor_id := DBMS_SQL.OPEN_CURSOR;

FOR i IN (WITH

pdbs AS ( -- list of PDBs ordered by CON_ID with enumerator as rank_num

SELECT con_id, name, RANK () OVER (ORDER BY con_id) rank_num FROM v$containers WHERE con_id <> 2

),

slot AS ( -- PDBs count

SELECT MAX(rank_num) count FROM pdbs

),

start_time AS (

SELECT con_id, name,

(TRUNC(SYSDATE) + (:weekday_start_hh24 / 24) + ((pdbs.rank_num - 1) * :weekday_hours / (slot.count - 1) / 24)) weekdays,

(TRUNC(SYSDATE) + (:weekend_start_hh24 / 24) + ((pdbs.rank_num - 1) * :weekend_hours / (slot.count - 1) / 24)) weekends

FROM pdbs, slot

WHERE slot.count > 1

)

SELECT con_id, name,

TO_CHAR(weekdays, 'HH24') weekdays_hh24, TO_CHAR(weekdays, 'MI') weekdays_mi, TO_CHAR(weekdays, 'SS') weekdays_ss,

TO_CHAR(weekends, 'HH24') weekends_hh24, TO_CHAR(weekends, 'MI') weekends_mi, TO_CHAR(weekends, 'SS') weekends_ss

FROM start_time

ORDER BY

con_id)

LOOP

DBMS_OUTPUT.PUT_LINE('PDB:'||i.name||' weekdays:'||i.weekdays_hh24||':'||i.weekdays_mi||':'||i.weekdays_ss||' duration:'||:weekday_duration||'h weekends:'||i.weekends_hh24||':'||i.weekends_mi||':'||i.weekends_ss||' duration:'||:weekend_duration||'h');

DBMS_SQL.PARSE(c => l_cursor_id, statement => :v_cursor, language_flag => DBMS_SQL.NATIVE, container => i.name);

DBMS_SQL.BIND_VARIABLE(c => l_cursor_id, name => 'b_weekdays_hh24', value => i.weekdays_hh24);

DBMS_SQL.BIND_VARIABLE(c => l_cursor_id, name => 'b_weekdays_mi', value => i.weekdays_mi);

DBMS_SQL.BIND_VARIABLE(c => l_cursor_id, name => 'b_weekdays_ss', value => i.weekdays_ss);

DBMS_SQL.BIND_VARIABLE(c => l_cursor_id, name => 'b_weekday_duration', value => :weekday_duration);

DBMS_SQL.BIND_VARIABLE(c => l_cursor_id, name => 'b_weekends_hh24', value => i.weekends_hh24);

DBMS_SQL.BIND_VARIABLE(c => l_cursor_id, name => 'b_weekends_mi', value => i.weekends_mi);

DBMS_SQL.BIND_VARIABLE(c => l_cursor_id, name => 'b_weekends_ss', value => i.weekends_ss);

DBMS_SQL.BIND_VARIABLE(c => l_cursor_id, name => 'b_weekend_duration', value => :weekend_duration);

l_rows_processed := DBMS_SQL.EXECUTE(c => l_cursor_id);

END LOOP;

DBMS_SQL.CLOSE_CURSOR(c => l_cursor_id);

END;

/

SPO OFF;

How to execute some SQL in all Pluggable Databases (PDBs)

If you are on 12c (and you should be) and your database is truly multitenant, you may need to execute some SQL in all PDBs. OEM is awesome when it comes to executing a Job in a set of databases, and if such a Job is a SQL Script then you can write it to do the same SQL in each PDB out of the set, as long as it is not a Standby. I have seen SQL Scripts doing that by making use of dynamic SQL: generating “ALTER SESSION SET CONTAINER = xxx” commands, spooling them to a text file, and executing that text file trusting its content. This approach works fine but is not very clean, and opens the door to some issues with the spooled file. I won’t get into the details, but one is security…

In order to avoid using a spool file with dynamic SQL, and having the parent script trust that such a dynamic script is indeed available and legit, what I am proposing here is the use of good old DBMS_SQL: still dynamic SQL, but self-contained. Look at the sample code below; this script simply enables the tasks of the Auto Task set provided by Oracle. Of course the script assumes that someone has disabled one or more of the three tasks in some PDBs… or maybe in all of them, and that some databases out of the farm may have the same issue… So if in this example we just want to enable all 3 tasks in all PDBs for all databases in the farm, then we could schedule the sample script as an OEM Job, and execute it every once in a while.

Anyways, enjoy the sample script and consider using it for other purposes. Nothing new, but just a simple and clean case of using DBMS_SQL on multitenant, while avoiding having to execute a script generated by another script and all the headaches that such action may cause when something goes wrong.

COL report_date NEW_V report_date;

SELECT TO_CHAR(SYSDATE, 'YYYY-MM-DD"T"HH24-MI-SS') report_date FROM DUAL;

SPO /tmp/change_all_pdbs_&&report_date..txt;

VAR v_cursor CLOB;

BEGIN

:v_cursor := q'[

DECLARE

PRAGMA AUTONOMOUS_TRANSACTION;

BEGIN

DBMS_OUTPUT.PUT_LINE('ENABLE DBMS_AUTO_TASK_ADMIN');

DBMS_AUTO_TASK_ADMIN.ENABLE;

FOR i IN (SELECT client_name, operation_name

FROM dba_autotask_operation

WHERE status = 'DISABLED'

ORDER BY 1, 2)

LOOP

DBMS_OUTPUT.PUT_LINE('ENABLE CLIENT_NAME:'||i.client_name||' OPERATION:'||i.operation_name);

DBMS_AUTO_TASK_ADMIN.ENABLE

( client_name => i.client_name

, operation => NULL

, window_name => NULL

);

END LOOP;

COMMIT;

END;

]';

END;

/

PRINT v_cursor;

SET SERVEROUTPUT ON

DECLARE

l_cursor_id INTEGER;

l_rows_processed INTEGER;

BEGIN

l_cursor_id := DBMS_SQL.OPEN_CURSOR;

FOR i IN (SELECT name

FROM v$containers

WHERE con_id > 2

AND open_mode = 'READ WRITE'

ORDER BY 1)

LOOP

DBMS_OUTPUT.PUT_LINE('PDB:'||i.name);

DBMS_SQL.PARSE

( c => l_cursor_id

, statement => :v_cursor

, language_flag => DBMS_SQL.NATIVE

, container => i.name

);

l_rows_processed := DBMS_SQL.EXECUTE(c => l_cursor_id);

END LOOP;

DBMS_SQL.CLOSE_CURSOR(c => l_cursor_id);

END;

/

SPO OFF;